How RunAnywhere Grew AI Visibility from 2% to 28% in 3 Weeks

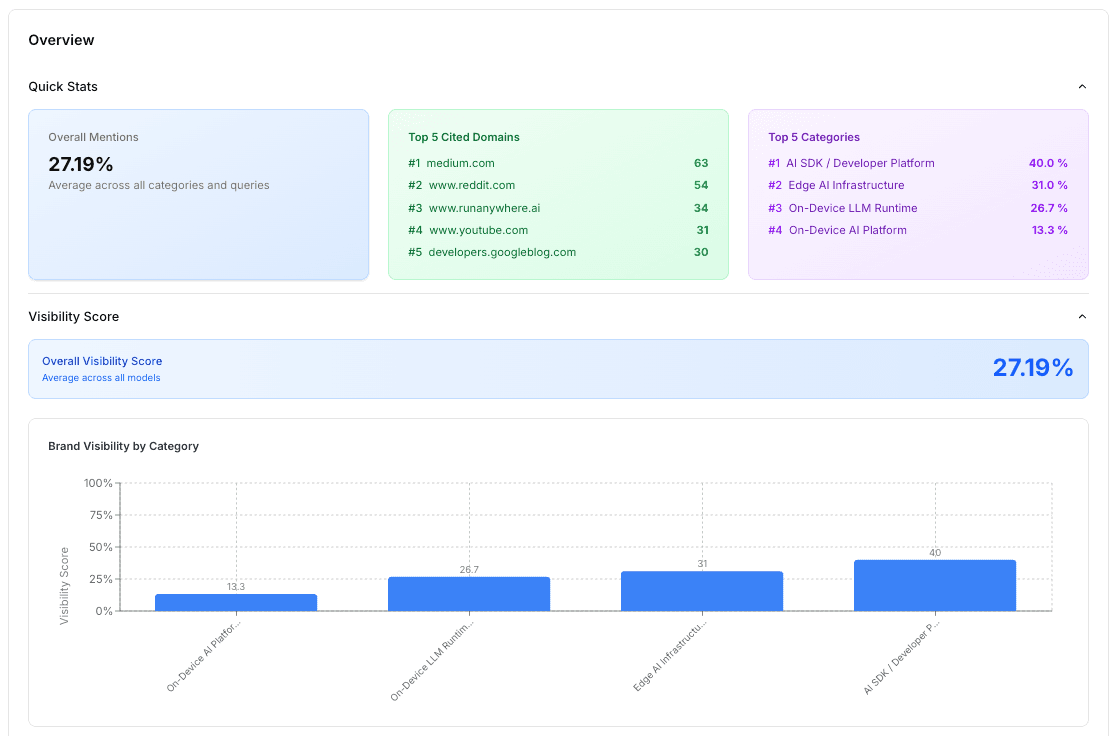

In 3 weeks, RunAnywhere went from minimal visibility in LLM-generated answers to being the #3 most cited domain across 5 AI platforms, turning AI search into a core discovery channel for developers and enterprises seeking on-device AI infrastructure.

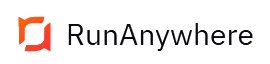

Key Results

+1,529% overall LLM visibility increase (1.67% → 27.19%)

#3 Most Cited Domain by LLMs across all queries

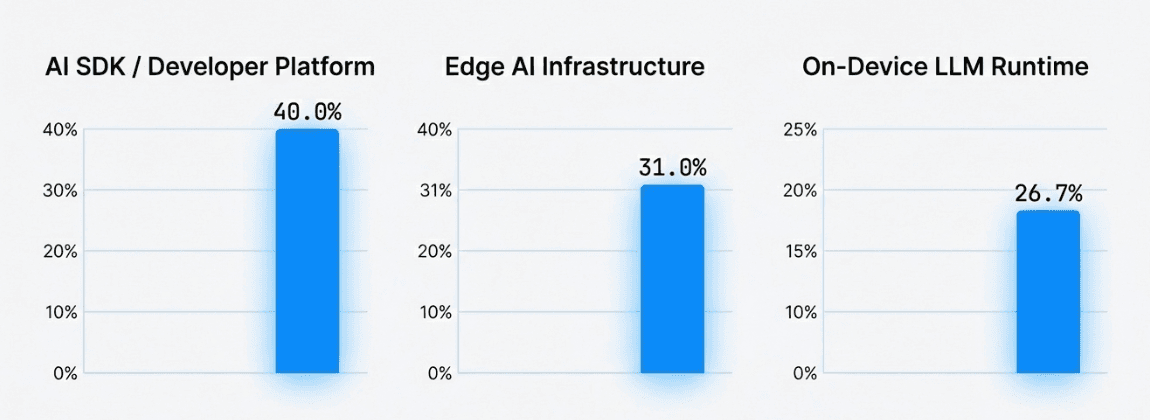

+40% Visibility in AI SDK / Developer Platform category

5 LLMs tracked: Grok, GPT, Perplexity, Claude, Google AI Mode

26 queries citing RunAnywhere URLs in final execution

About RunAnywhere

RunAnywhere is an on-device AI platform and SDK that enables developers to run large language models locally on mobile and edge devices without cloud dependency. Designed for latency-sensitive, privacy-first, and offline-capable applications, RunAnywhere lets teams deploy powerful AI directly on hardware.

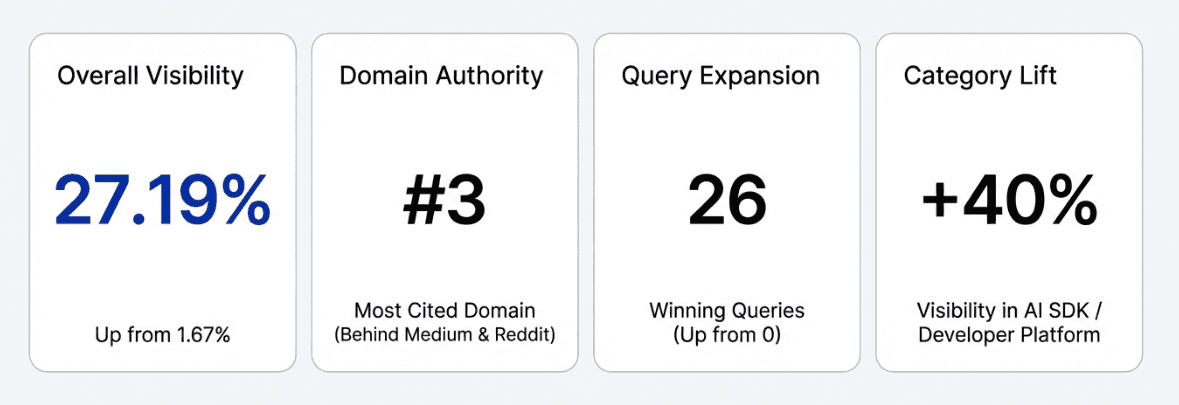

Despite strong technical differentiation, RunAnywhere had virtually no presence in AI-generated answers. When developers queried ChatGPT, Perplexity, or Grok for on-device AI tools, RunAnywhere wasn't mentioned. That is a critical gap in an era where AI search is becoming the primary discovery channel for developer tools.

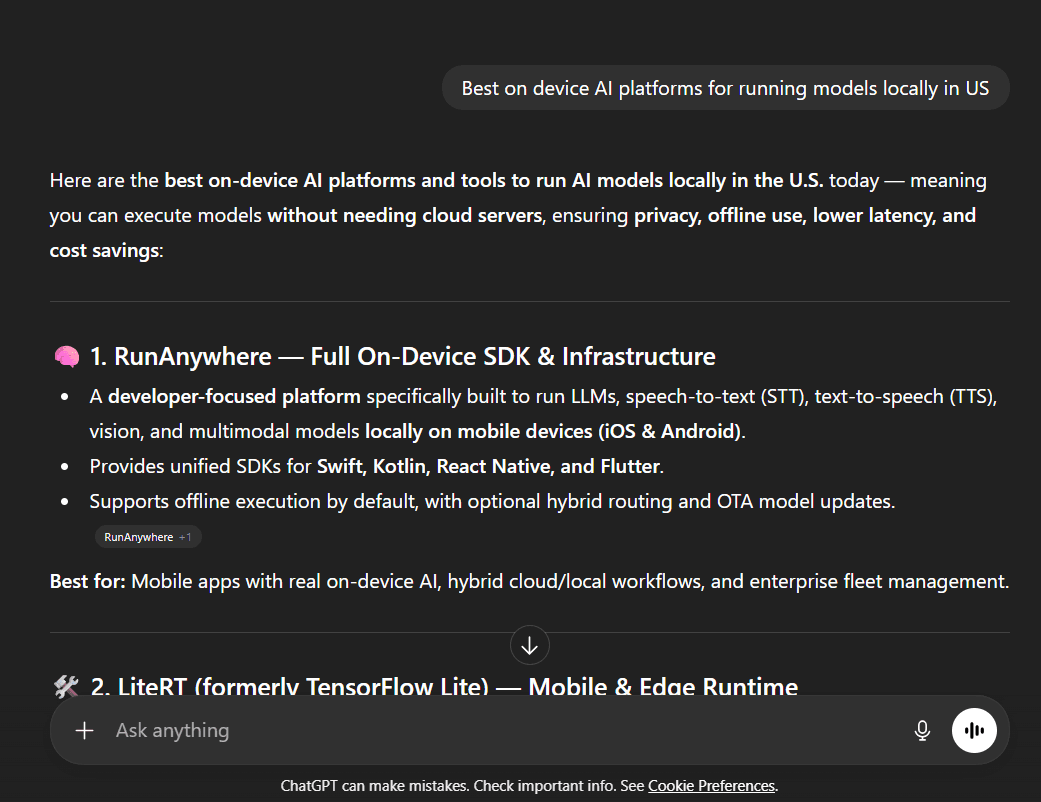

The Challenge: Low Visibility Where Buyers Were Looking

RunAnywhere's baseline measurement on January 27, 2026, confirmed the scale of the problem. Across 5 LLMs and 4 keyword categories central to its product, the company had an overall mention rate of just 1.67%.

At the platform level, GPT Fast, Perplexity, Claude, and Google AI Mode all returned 0% mentions. Only Grok showed any signal, at 10%. The domain runanywhere.ai was not cited by any LLM at all.
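The case study does not spell out how the mention rate is computed. A minimal sketch, assuming it is the share of collected answers that mention the brand by name, might look like the following. The function name, the tuple layout, and the sample answers are all hypothetical and are not taken from XLR8 AI's tooling.

```python
from collections import defaultdict


def mention_rates(responses, brand):
    """Return (per_platform, overall) mention rates as percentages.

    responses: iterable of (platform, query, answer_text) tuples.
    Assumption: an answer "mentions" the brand if the brand name
    appears anywhere in the answer text, ignoring case.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    needle = brand.lower()
    for platform, _query, answer in responses:
        totals[platform] += 1
        if needle in answer.lower():
            hits[platform] += 1
    per_platform = {p: 100.0 * hits[p] / totals[p] for p in totals}
    total = sum(totals.values())
    overall = 100.0 * sum(hits.values()) / total if total else 0.0
    return per_platform, overall


# Hypothetical sample: two platforms, two discovery queries each.
sample = [
    ("Grok", "best on-device AI platforms", "Options include RunAnywhere and others."),
    ("Grok", "best AI SDK for mobile", "Consider several mobile runtimes."),
    ("Claude", "best on-device AI platforms", "Popular choices vary by device."),
    ("Claude", "best AI SDK for mobile", "Several SDKs exist."),
]
per_platform, overall = mention_rates(sample, "RunAnywhere")
print(per_platform, overall)
```

Tracking the per-platform and overall figures separately matters because a single platform can carry the whole average, as Grok did at baseline.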

The Strategy: XLR8 AI's Optimization Playbook

XLR8 AI designed a structured, multi-layered pilot to build RunAnywhere's AI search presence from the ground up. The experiment covered 5 LLMs, 4 keyword categories, and 20 discovery queries, with weekly execution cycles to measure and compound results.

All experiments used discovery-type queries — the questions buyers ask at the top of the funnel. Examples:

"What are the best on-device AI platforms for running models locally?"

"Find me the best AI SDK for running models on mobile devices"

"Recommend an on-device LLM runtime with cloud fallback"

"Which edge AI infrastructure tools support real-time analytics?"

Pilot Optimization Actions

LLMs.txt Implementation — Created a structured data file that helps AI crawlers correctly categorize RunAnywhere's products and positioning. The foundational step for LLM discoverability.

5 Targeted Listicles — Published listicle-format articles optimized for the exact discovery queries being tracked. LLMs heavily favor this format for recommendation-style answers. Each piece positioned RunAnywhere alongside known alternatives in a way AI models are trained to draw from.

In-Depth Guide — A comprehensive implementation guide establishing RunAnywhere's authority in on-device AI infrastructure — the type of content LLMs cite for informational queries.

Press Release + Product Release — A Y Combinator announcement and SDK v0.17.5 release article to generate newsworthiness signals and increase the breadth of indexable RunAnywhere content.

GitHub Third-Party Citations (4 PRs, 2 Merged) — Contributed RunAnywhere to the Awesome-On-Device-AI GitHub repository — a resource already cited 32 times in the January baseline. Getting listed in trusted, high-authority third-party sources is one of the fastest ways to earn LLM citations through proxy authority.

Category Visibility by February

Domain Citation Momentum

Jan 27: runanywhere.ai — not cited by any LLM

Feb 13: runanywhere.ai — cited, ranked #17 among all domains

Feb 20: runanywhere.ai — ranked #3 among all cited domains, behind only medium.com and reddit.com

In the final execution, 12 RunAnywhere URLs were cited across 26 queries — a 300% increase in cited URLs from execution #2, and an expansion from 8 to 26 matching queries.
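A domain ranking like the one above can be reproduced by counting the hosts of every URL the LLMs cite in an execution. The sketch below is one way to do that, assuming the cited URLs have already been extracted from the answers. The function name and the sample URLs are hypothetical placeholders, not data from the pilot.

```python
from collections import Counter
from urllib.parse import urlparse


def rank_cited_domains(cited_urls):
    """Return a list of (rank, domain, citation_count), most cited first."""
    counts = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        # Treat "www.example.com" and "example.com" as the same domain.
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    # Sort by count descending, then alphabetically for stable ties.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(rank, domain, n) for rank, (domain, n) in enumerate(ranked, start=1)]


# Hypothetical citations gathered from one execution.
urls = [
    "https://medium.com/example-a",
    "https://medium.com/example-b",
    "https://medium.com/example-c",
    "https://www.reddit.com/r/example",
    "https://reddit.com/r/another-example",
    "https://runanywhere.ai/",
]
ranking = rank_cited_domains(urls)
for rank, domain, n in ranking:
    print(rank, domain, n)
```

Comparing the ranking from one execution to the next is what surfaces momentum such as the move from #17 to #3.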

Why It Worked: The Three Drivers

Content Matched to How LLMs Retrieve

XLR8 AI's content strategy was built for LLM retrieval behavior rather than for Google. Listicle formats, comparison framing, and semantic alignment with the exact discovery queries are the content traits AI models are most likely to draw from. Five blog posts became regularly cited across 6–8 queries each within two weeks of publication.

Third-Party Authority Signals

GitHub repositories like Awesome-On-Device-AI are trusted by LLMs as high-signal aggregators. By getting RunAnywhere added to a repository that was already cited 32 times at baseline, XLR8 AI effectively borrowed existing LLM trust and redirected it toward RunAnywhere. Both merged pull requests showed citation lift in the very next execution.

Structural Discoverability (LLMs.txt)

Without a clear signal telling AI crawlers what RunAnywhere is and what category it belongs to, even good content would be miscategorized or ignored. The LLMs.txt implementation ensured that every new piece of content published was correctly attributed — turning a content volume play into a precise category ownership play.
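The case study does not reproduce RunAnywhere's actual file. As an illustration only, the sketch below generates a file in the layout described by the public llms.txt proposal: an H1 title, a blockquote summary, then H2 sections containing link lists. The helper name, the section headings, and the example.com URLs are hypothetical; the summary text is adapted from the product description above.

```python
def build_llms_txt(name, summary, sections):
    """Build llms.txt content: title, blockquote summary, linked sections.

    sections: dict mapping a section heading to a list of
    (link_title, url, note) tuples; note may be an empty string.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical sections and placeholder URLs, for illustration only.
text = build_llms_txt(
    "RunAnywhere",
    "On-device AI platform and SDK for running large language models "
    "locally on mobile and edge devices without cloud dependency.",
    {
        "Docs": [
            ("Quickstart", "https://example.com/docs/quickstart", "SDK setup"),
        ],
        "Blog": [
            ("On-device AI guide", "https://example.com/blog/guide", ""),
        ],
    },
)
print(text)
```

The resulting text would be served from the site root as /llms.txt, giving crawlers one place to read the product category and the pages that matter most.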

"It's crazy!! I just like opened chatgpt.com on incognito and searched for best on device AI platforms for running models on the edge and mobile. And we are showing up! For some reason. I don't know how but that's insane actually, and it's the blog you guys wrote, that's crazy."

Sanchit Monga

Founder, RunAnywhere

Why AI Visibility is the New Competitive Moat

AI search optimization is fundamentally different from traditional SEO. Success requires understanding LLM retrieval behavior, structuring content for the formats AI models favor, building third-party authority across sources LLMs already trust, and moving fast as platforms update weekly.

RunAnywhere had a category-defining product. XLR8 AI ensured that when developers and engineering teams ask AI assistants which on-device AI platform to use, RunAnywhere isn't just listed. It's the recommendation.