Airtable AI Visibility Benchmark 2026: How Airtable Appears Across ChatGPT, Gemini & Claude

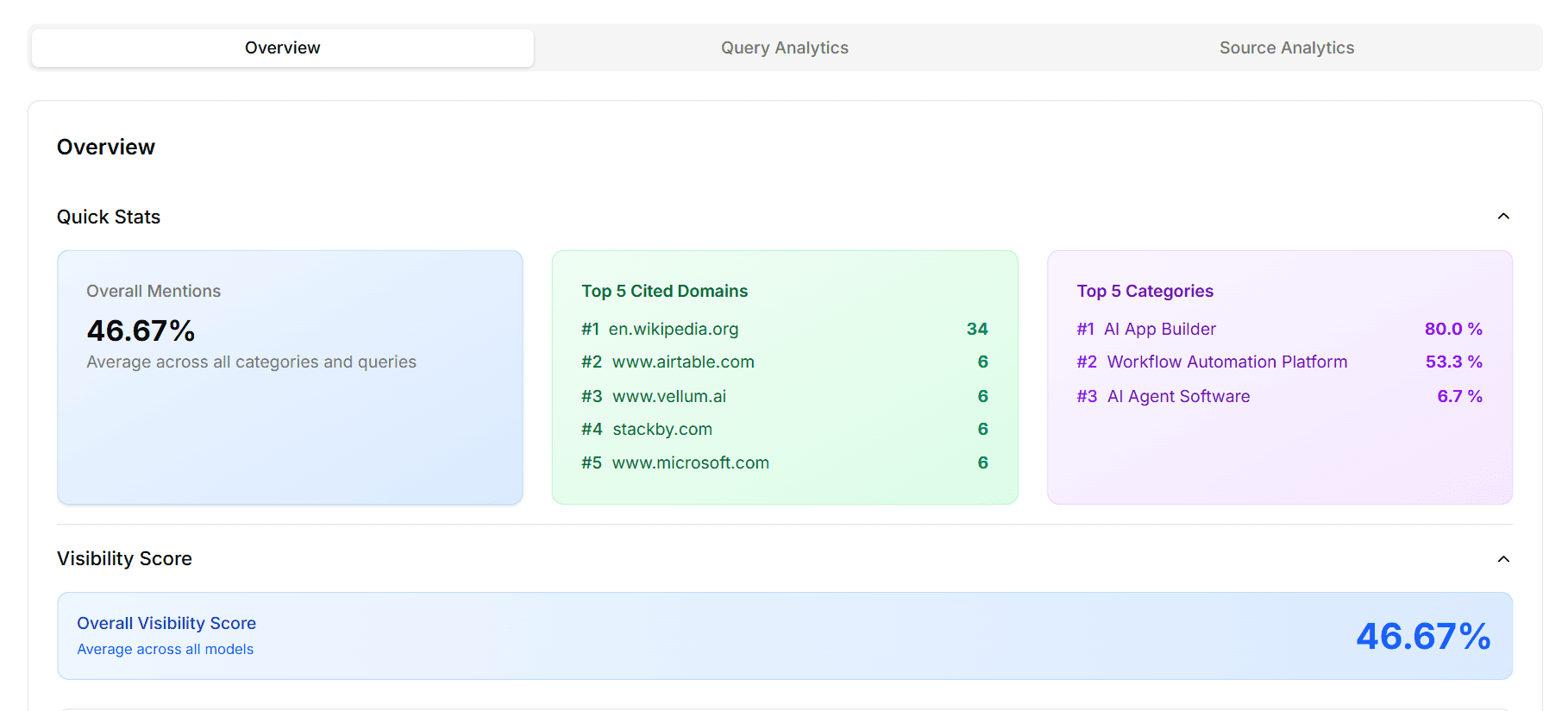

We ran 45 structured queries across three major AI models to measure how often Airtable gets recommended when buyers ask about AI app builders, workflow automation, and AI agent software. The headline: strong legacy recognition in one category and near-total invisibility in the category that matters most in 2026.

Key Numbers at a Glance

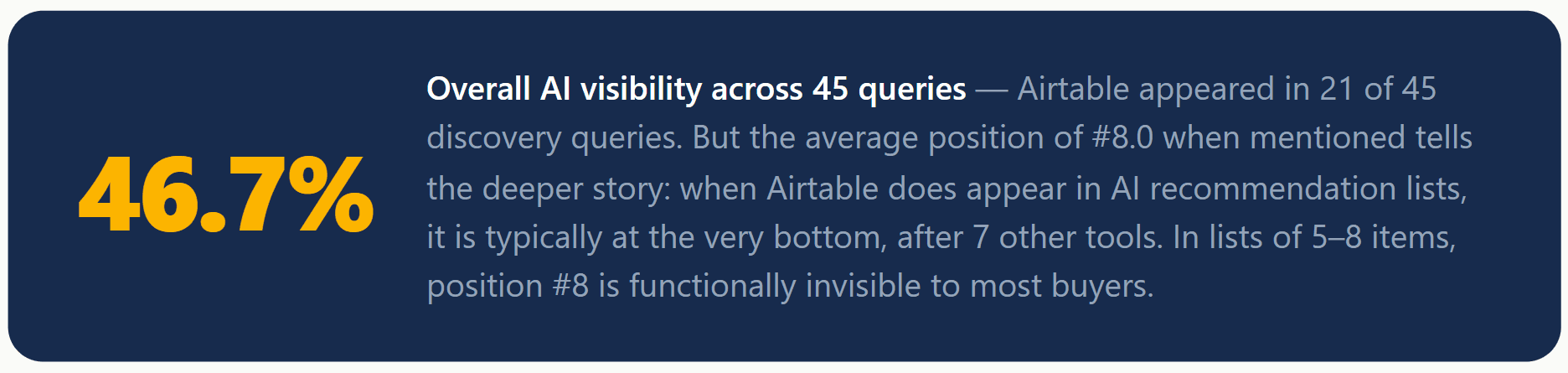

46.7% Overall visibility (21 of 45 queries)

#8.0 Avg brand position when mentioned

21 Total mentions across all models

6.7% AI Agent visibility (1 of 15 queries)

Buyers searching for AI app builders, workflow automation platforms, and AI agent software are increasingly asking ChatGPT, Gemini, and Claude for recommendations before they ever visit a vendor website. The short list an AI model returns often determines which tools enter serious evaluation, and brands that appear deep in those lists, or not at all, quietly lose pipeline to newer, AI-native competitors.

To measure exactly where Airtable stands in this AI-driven discovery landscape, XLR8 AI ran a structured GEO (Generative Engine Optimization) benchmark on April 19, 2026 — 45 intent-aligned queries across GPT Fast (ChatGPT), Gemini, and Claude, spanning Airtable's three core positioning categories. The results reveal a brand with a genuine strength in one legacy category and an urgent visibility problem everywhere else.

Why Airtable's AI Visibility Matters in 2026

In 2026, AI assistants are acting as front doors to software discovery. For a platform like Airtable, which competes across spreadsheet databases, low-code app builders, workflow automation, and now AI agents, the way LLMs categorize and recommend the brand directly shapes whether it ends up in buyers' shortlists. Airtable's 46.7% overall visibility and average position of #8.0 signal that the brand is present in AI discovery — but rarely influential within it.

XLR8 AI's GEO benchmarks exist precisely to surface this kind of structural risk before it compounds into a pipeline problem. Airtable's near-zero visibility in AI agent queries (6.7%) is not an edge case — it is a signal that LLMs do not yet map Airtable to the AI-native category that is driving the most buyer intent in 2026.

Experiment Overview: Scope, Queries, and Methodology

XLR8 AI designed this benchmark using its AI visibility tracking platform. Here is exactly how it was run:

Total queries: 45 intent-aligned discovery queries across three categories

Models tested: GPT Fast / ChatGPT (15 queries), Claude (15 queries), Gemini (15 queries)

Categories: AI App Builder, Workflow Automation Platform, AI Agent Software

Metrics captured: Brand mention (yes/no), rank position when mentioned, competitor co-occurrences

Company: Airtable (airtable.com)

Date run: April 19, 2026

Note: This benchmark tested GPT Fast, Claude, and Gemini. Perplexity and Google AI Mode were not included in this experiment run. For each query, XLR8 AI's platform logged whether Airtable was mentioned, its rank position, and which competitor brands appeared in the same answer.
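The two core metrics described above (mention rate and rank position when mentioned) can be sketched in a few lines of Python. This is a minimal illustration, not XLR8 AI's implementation: the `QueryResult` record, its field names, and the sample rows are all assumptions made up for the example and are not the benchmark's raw data.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class QueryResult:
    """One logged AI answer. Field names are illustrative, not XLR8 AI's schema."""
    model: str
    category: str
    mentioned: bool
    position: Optional[int] = None  # rank in the answer's list; None if not mentioned
    competitors: list[str] = field(default_factory=list)


def visibility_rate(results: list[QueryResult]) -> float:
    """Share of queries in which the brand was mentioned at all."""
    if not results:
        return 0.0
    return sum(r.mentioned for r in results) / len(results)


def average_position(results: list[QueryResult]) -> Optional[float]:
    """Mean rank over only those answers where the brand appeared with a rank."""
    ranks = [r.position for r in results if r.mentioned and r.position is not None]
    return sum(ranks) / len(ranks) if ranks else None


# Made-up sample rows for illustration only; NOT the benchmark's records.
sample = [
    QueryResult("Claude", "AI App Builder", True, 3, ["Retool", "Zapier"]),
    QueryResult("Gemini", "Workflow Automation Platform", True, 7, ["Zapier", "Make"]),
    QueryResult("GPT Fast", "AI Agent Software", False, None, ["n8n", "Lindy"]),
    QueryResult("GPT Fast", "AI App Builder", True, 5, ["Salesforce"]),
]

print(f"visibility: {visibility_rate(sample):.1%}")
print(f"average position: {average_position(sample)}")
```

Note that average position is computed only over answers that mention the brand, which is why a brand can have a modest mention rate and a poor average position at the same time.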

Headline Results: Airtable's 2026 AI Visibility Snapshot

Airtable's 46.7% overall visibility is well below what a category-defining platform should expect. More concerning is the average position of #8.0: Airtable is not just appearing less often than competitors, it tends to appear at or near the bottom of the list when it does show up. Combined with the 6.7% visibility in AI Agent Software queries, this benchmark paints a clear picture: Airtable's AI-native positioning is not yet resonating with LLMs.

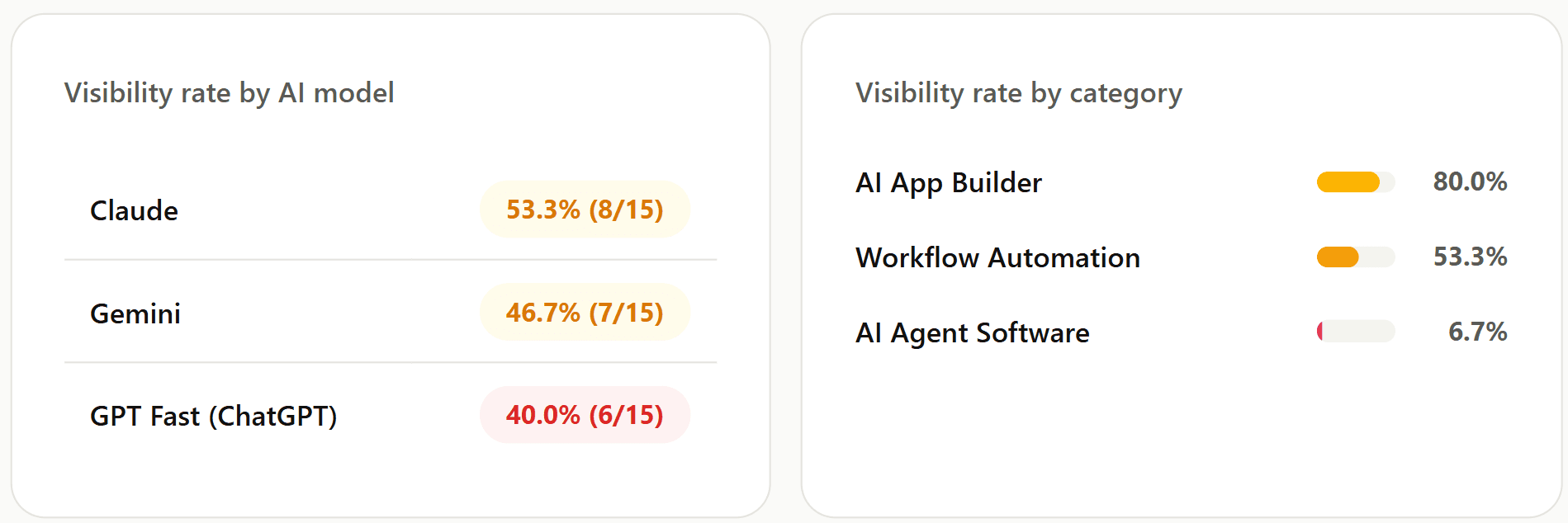

Model-Level Performance: ChatGPT, Gemini, and Claude

Claude: Airtable's Strongest Model at 53.3%

Claude delivered the highest visibility for Airtable, mentioning it in 8 of 15 queries (53.3%). Claude still maps Airtable to low-code app building and structured data workflows more consistently than the other models. However, even in Claude, Airtable's average position remains deep in recommendation lists — present but not prioritized. Claude is the strongest model for Airtable's GEO program to build from, but 53.3% still means Airtable misses nearly half of Claude's answers.

Gemini: Middle-of-the-Pack at 46.7%

Gemini mentioned Airtable in 7 of 15 queries (46.7%). Gemini recognizes Airtable in AI app builder and some workflow automation contexts, but defaults to purpose-built automation tools and enterprise platforms in the majority of queries. For Airtable, Gemini represents a reachable improvement target — its middle-ground performance suggests that relatively modest content and GEO investment could move it into the top tier of recommendations.

GPT Fast (ChatGPT): Airtable's Most Urgent Problem at 40%

Critical finding: ChatGPT mentions Airtable in only 40% of queries

GPT Fast is the most widely used AI research interface for software buyers — yet Airtable appears in only 6 of 15 queries (40%). XLR8 AI interprets this as a sign that ChatGPT's internal representation of Airtable is anchored to "spreadsheet" and "database" rather than AI-native automation or agents. In 9 of 15 ChatGPT queries, Airtable is not mentioned at all — replaced by Salesforce, Zapier, Microsoft, n8n, and newer AI agent platforms. Without targeted GEO work, Airtable risks ceding this channel entirely.

Category Performance: One Stronghold, Two Crises

AI App Builder: 80% — Airtable's Only Category Leadership

Strength: Airtable is recognized as an AI app builder — this is the foundation to build from

In AI App Builder queries, Airtable achieved 80% visibility (12/15 queries) — the only category where it performs like a category leader. LLMs still associate Airtable with building custom applications on top of relational data. When users ask for low-code or AI-enhanced app builders, Airtable consistently appears. This is the GEO anchor that all future optimization work should extend, not replace.

Workflow Automation Platform: 53.3% — Competitive but Inconsistent

In Workflow Automation Platform queries, Airtable appeared in 8 of 15 queries (53.3%). This is a middle-ground result — Airtable is recognized as relevant in some automation contexts, but LLMs more consistently favor tools that market themselves primarily as integration or orchestration platforms (Zapier, Make, n8n). The 7 missed queries in this category are all prompts that emphasize AI-powered agents or intelligent orchestration — framing that Airtable's current content ecosystem does not yet own.

AI Agent Software: 6.7% — Near-Total Invisibility

Critical finding: Airtable appears in just 1 of 15 AI Agent queries

This is the benchmark's most urgent finding. Airtable appeared in only 1 of 15 AI Agent Software queries (6.7%) — effectively invisible in the fastest-growing query category in the dataset. Across all three models, AI agent tools including n8n, Lindy, Gumloop, Zapier, Make, and Salesforce have entirely displaced Airtable. LLMs do not currently map Airtable to the AI agent category. For a platform that has invested in AI-native capabilities, this gap between product reality and LLM perception is the most strategically dangerous finding in this benchmark.
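The per-model and per-category figures reported above can be cross-checked with simple arithmetic. The short Python sketch below uses only the counts stated in this report (the variable and function names are illustrative) and confirms that both breakdowns add up to the same 21 total mentions.

```python
# Mention counts as reported in this benchmark (15 queries per model and per category).
QUERIES_PER_GROUP = 15
TOTAL_QUERIES = 45

mentions_by_model = {"Claude": 8, "Gemini": 7, "GPT Fast": 6}
mentions_by_category = {
    "AI App Builder": 12,
    "Workflow Automation Platform": 8,
    "AI Agent Software": 1,
}


def pct(count: int, total: int) -> float:
    """Percentage rounded to one decimal place, as the report presents it."""
    return round(100 * count / total, 1)


# Both breakdowns slice the same 45 queries, so their totals must agree.
total_mentions = sum(mentions_by_model.values())
assert total_mentions == sum(mentions_by_category.values()) == 21

for name, count in {**mentions_by_model, **mentions_by_category}.items():
    print(f"{name}: {count}/{QUERIES_PER_GROUP} = {pct(count, QUERIES_PER_GROUP)}%")

print(f"Overall: {total_mentions}/{TOTAL_QUERIES} = {pct(total_mentions, TOTAL_QUERIES)}%")
```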

The Average Position Problem: #8.0 Means the Bottom of Every List

An average position of #8.0 deserves its own section because it changes how the overall 46.7% visibility number should be interpreted. A brand that appears in 46.7% of queries at position #2 is in a very different situation from a brand that appears in 46.7% of queries at position #8.

Most AI-generated recommendation lists contain between 5 and 8 tools. A brand ranked #8 is at the very last position — or beyond the typical list length entirely. XLR8 AI's interpretation is that when Airtable does appear in AI answers, it appears as an afterthought: a tool the model includes for completeness rather than as a primary recommendation. In the context of buyer shortlists, this means Airtable is technically counted but functionally overlooked.

Position #8 means Airtable is present in AI answers — but absent from buyer decisions

Software buyers using ChatGPT, Gemini, or Claude to build a shortlist typically act on the first 3–5 tools in a recommendation list. A brand consistently appearing at position #8 may be indexed in LLM training data, but it is not shaping purchase decisions. XLR8 AI considers closing the position gap — not just increasing mention rate — to be as important as improving overall visibility for Airtable's GEO program.
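One way to make the position argument concrete is to discount the mention rate by rank. The sketch below is a hypothetical heuristic invented for illustration (dividing visibility by average position); it is not a metric XLR8 AI reports. The default shortlist cutoff of 5 is an assumption taken from the upper end of the "first 3–5 tools" range mentioned above.

```python
def discounted_visibility(rate: float, avg_position: float) -> float:
    """Hypothetical heuristic: mention rate divided by average rank.

    Not an XLR8 AI metric; it only illustrates that the same mention
    rate is worth less the further down the list a brand appears.
    """
    if avg_position < 1:
        raise ValueError("positions start at 1")
    return rate / avg_position


def in_shortlist(position: float, shortlist_size: int = 5) -> bool:
    """True if a rank falls inside the part of the list buyers act on (assumed cutoff)."""
    return position <= shortlist_size


# Same 46.7% mention rate, two different average positions.
for position in (2, 8):
    score = discounted_visibility(0.467, position)
    print(f"position #{position}: score={score:.3f}, in shortlist={in_shortlist(position)}")
```

Under this assumption, the same 46.7% mention rate scores four times higher at position #2 than at position #8, and only the former falls inside a five-tool shortlist.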

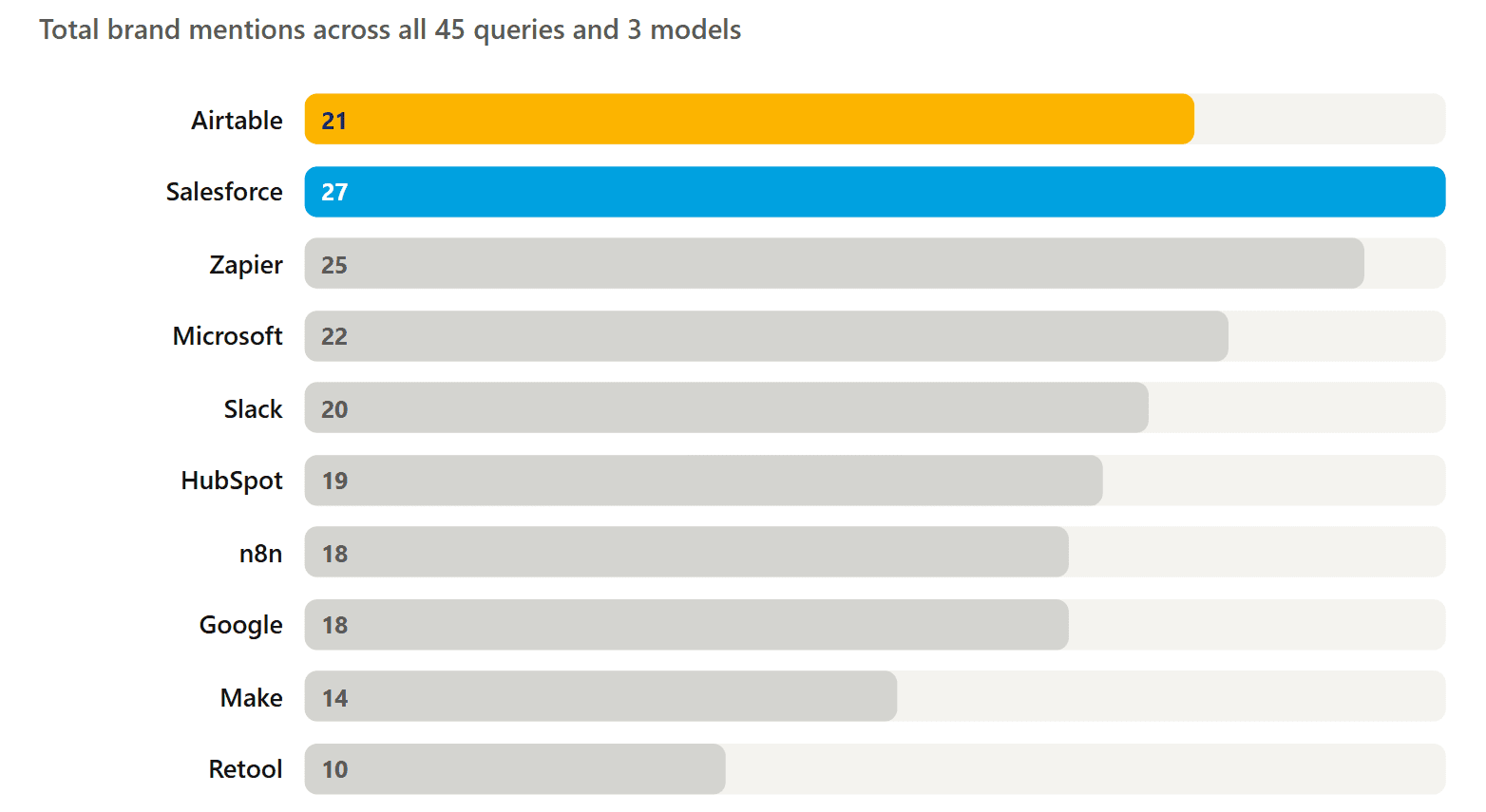

Competitive Landscape: Who Displaces Airtable in AI Answers

XLR8 AI's benchmark tracked which competitors appeared most frequently across all 45 queries. The brands that dominate these lists are the ones shaping the AI-native consideration set that Airtable aims to influence.

Salesforce and Zapier Own the Top Two Slots

Salesforce appeared 27 times and Zapier 25 times, both ahead of Airtable's 21 mentions despite Airtable being the brand under study. LLMs treat these two platforms as default anchors for enterprise app building and workflow orchestration, respectively. For Airtable, this means the primary competitive fight is not against Retool or Baserow; it is against the established category leaders that LLMs use as reference points when building recommendation lists.

Microsoft's Dominant Combined Presence

Microsoft — including Power Automate, Azure, Microsoft 365, and Dataverse — appears across 22+ combined mentions. LLMs treat Microsoft's ecosystem as the default enterprise workflow and automation layer, particularly in queries about scalable enterprise platforms. This is a structural challenge for Airtable in enterprise-intent queries: Microsoft's brand authority and ecosystem depth give it a permanent presence in LLM answers for these categories.

The AI Agent Natives: n8n, Lindy, and Gumloop

Perhaps the most telling competitive signal is the appearance of newer AI agent platforms — n8n (18 mentions), Lindy (9), and Gumloop (8) — all of which outperform Airtable in the AI Agent Software category. These tools have built their entire brand narrative around agentic automation, and LLMs reflect that. XLR8 AI sees this as Airtable's most urgent competitive threat: purpose-built AI agent platforms are establishing LLM mindshare in the category before Airtable has had a chance to claim it.
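The mention counts quoted in this section can be put side by side with a small Python sketch. It uses only the exact counts stated in this report; Microsoft is left out because the report gives its combined total only as "22+". The variable names are illustrative.

```python
# Total mentions across all 45 queries, as reported in this benchmark.
# Microsoft is omitted: the report gives only "22+" combined mentions.
mention_counts = {
    "Salesforce": 27,
    "Zapier": 25,
    "Airtable": 21,
    "n8n": 18,
    "Lindy": 9,
    "Gumloop": 8,
}

# Sort brands from most to least mentioned.
ranked = sorted(mention_counts.items(), key=lambda kv: kv[1], reverse=True)

# Express each brand's count relative to Airtable's.
baseline = mention_counts["Airtable"]
for brand, count in ranked:
    print(f"{brand:<11}{count:>3}  {count / baseline:.2f}x Airtable")
```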

5 Key Findings from the Airtable GEO Benchmark

AI App Builder is Airtable's only strong category — and must serve as the GEO anchor

80% visibility in AI App Builder is the benchmark's one bright spot. LLMs still reliably associate Airtable with building custom applications on structured relational data. This legacy strength is the foundation all GEO investment must extend — not replace. Airtable needs to use this category credibility as the bridge into workflow automation and AI agent narratives, rather than abandoning it for a pure pivot to AI-native positioning.

AI Agent Software at 6.7% is an existential category risk

Appearing in 1 of 15 AI Agent Software queries means Airtable is effectively absent from the fastest-growing query category in this benchmark. Tools like n8n, Lindy, Gumloop, Zapier, and Make have established LLM associations with agentic automation that Airtable simply does not have yet. If Airtable's product roadmap is pointing toward AI agents and orchestration, this GEO gap means buyers will not discover that through AI assistants, the primary research channel in 2026.

Position #8.0 is as damaging as low visibility rate

Even the 46.7% of queries where Airtable does appear are not delivering meaningful influence. At an average position of #8, Airtable typically sits at or near the bottom of the list, after Salesforce, Zapier, Microsoft, Make, and multiple AI agent tools. XLR8 AI treats improving average position from #8 to #3–4 as being as important as improving overall mention rate. Both gaps need to close for Airtable to generate real pipeline impact from AI-mediated discovery.

ChatGPT is Airtable's weakest model — and the highest-priority channel to fix

GPT Fast's 40% visibility rate is the lowest of the three models and the most commercially significant gap. ChatGPT is the most widely used AI research interface for software evaluation. Airtable appearing in only 6 of 15 ChatGPT queries means the majority of buyers using ChatGPT to research this category never see Airtable in the answer. Shifting ChatGPT's internal representation of Airtable from "database" to "AI app builder and automation platform" is the highest-leverage GEO action available.

Salesforce and Zapier outrank Airtable in its own experiment — a share of voice crisis

Salesforce (27 mentions) and Zapier (25 mentions) both exceed Airtable's total mentions (21) across the full query set. In a benchmark designed around Airtable's own product categories, Airtable is not even the most-mentioned brand. This is a share of voice crisis that reflects a fundamental mismatch between how Airtable describes itself and how LLMs categorize it when answering buyer queries.

5 GEO Strategies Airtable Should Execute

Build a dedicated AI agent narrative from the ground up

The 6.7% AI Agent Software visibility cannot be fixed with incremental content updates. Airtable needs a dedicated, structured AI agent narrative: specific use cases (agents that read and write to Airtable bases, orchestrate multi-step operations, coordinate across Slack and Salesforce), detailed integration guides, and third-party examples of agents built on Airtable. LLMs need explicit, structured evidence to connect Airtable with agentic automation — and right now, almost none exists. This is the #1 priority in the entire benchmark.

Reframe Airtable as an automation and orchestration layer — not just a database

LLMs currently anchor Airtable to "spreadsheet" and "relational database." To improve Workflow Automation visibility from 53.3% and AI Agent visibility from 6.7%, Airtable's product pages, documentation, and solution content need to foreground automation capabilities: triggers, actions, AI workflows, cross-tool integrations. Use the exact terminology buyers put in their prompts ("workflow automation," "AI-powered automation," "multi-step orchestration") in H1s, H2s, and FAQs so models can re-categorize Airtable in their recommendation logic.

Protect and deepen the AI App Builder stronghold

80% visibility in AI App Builder is the one category Airtable owns. It must be actively maintained: fresh case studies of AI-powered apps built on Airtable, updated documentation that emphasizes AI capabilities alongside the relational data layer, and schema markup (SoftwareApplication, FAQPage) on key product pages. This category is the bridge — strengthening it creates the authority base from which Airtable can credibly expand into automation and agent categories.
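As a rough sketch of what the schema markup mentioned above could look like, the Python snippet below builds schema.org `SoftwareApplication` and `FAQPage` objects and wraps them in JSON-LD script tags. The property values, the FAQ question and answer text, and the `to_script_tag` helper are placeholders assumed for illustration; they are not Airtable's actual markup.

```python
import json

# Placeholder values for illustration; not Airtable's actual markup.
software_app = {
    "@context": "https://schema.org",
    "@type": "SoftwareApplication",
    "name": "Airtable",
    "url": "https://airtable.com",
    "applicationCategory": "BusinessApplication",
    "operatingSystem": "Web",
}

faq_page = {
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {
            "@type": "Question",
            "name": "Placeholder question about building AI-powered apps",
            "acceptedAnswer": {
                "@type": "Answer",
                "text": "Placeholder answer text supplied by the page owner.",
            },
        }
    ],
}


def to_script_tag(data: dict) -> str:
    """Hypothetical helper: wrap a JSON-LD object in the script tag a page embeds."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(to_script_tag(software_app))
print(to_script_tag(faq_page))
```

Each FAQ entry on a page would become one `Question` item in `mainEntity`, with the visible answer text repeated in `acceptedAnswer`.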

Invest in third-party and ecosystem content that LLMs can cite

The benchmark's citation data shows Wikipedia (34 cites), TechRadar (6), and partner/community domains as the primary sources LLMs pull from for this category. Airtable's on-site content is cited only 6 times — equal to several smaller competitor sites. The priority is to seed ecosystem content: partner integration guides, community-built agent templates, editorial coverage on TechRadar and similar publications, and Reddit/forum discussions that explicitly describe Airtable's automation and AI agent capabilities. External signals are what shift LLM representations fastest.

Target ChatGPT specifically with structured, retrieval-optimized content

ChatGPT's 40% visibility — the lowest of the three models — requires dedicated attention. ChatGPT relies heavily on its training corpus and structured content when generating recommendations. Publish long-form, heading-rich guides with FAQ schema markup that explicitly compare Airtable to Zapier, Make, and n8n in automation contexts. Titles like "Airtable for workflow automation: how it compares to Zapier and n8n" create the co-occurrence signals that shift ChatGPT's category mapping for Airtable over time.

Key takeaways from this benchmark

46.7% overall visibility — Airtable is present in AI discovery but not influential within it

Average position #8.0 — when mentioned, Airtable appears at the bottom of recommendation lists

AI App Builder: 80% — the only strong category, and Airtable's essential GEO anchor

Workflow Automation: 53.3% — competitive but inconsistent, especially on agentic automation queries

AI Agent Software: 6.7% (1/15) — near-total invisibility in the fastest-growing query category

ChatGPT is Airtable's weakest model at 40% — the highest-priority channel to improve

Salesforce (27) and Zapier (25) both outrank Airtable (21) in total mentions across the experiment

n8n, Lindy, and Gumloop are establishing AI agent LLM mindshare that Airtable is not yet competing for

What Low-Code and Workflow Platforms Get From AI Visibility Benchmarking

Airtable's benchmark is representative of a challenge facing many established SaaS platforms in 2026: strong legacy recognition that has not yet translated into AI-native discovery. Here is what a structured GEO program delivers for platforms in this situation:

Visibility into silent displacement

Traditional analytics cannot show you when ChatGPT is recommending Zapier or n8n instead of you. XLR8 AI's benchmarks surface these patterns before they show up as lagging revenue signals.

Category re-mapping strategy

When LLMs categorize your brand incorrectly — as a database rather than an automation platform — GEO experiments show exactly which content and signals need to change to shift that association.

Rank position improvement, not just mentions

Appearing at position #8 is not the same as appearing at #2. XLR8 AI tracks average rank alongside visibility rate so teams can optimize for actual influence, not just technical presence.

Repeatable, data-driven GEO program

One benchmark is a snapshot. XLR8 AI's platform turns it into a recurring measurement framework — so Airtable can see which GEO initiatives move the needle across ChatGPT, Gemini, and Claude over time.

Frequently Asked Questions

What is an AI visibility benchmark for a platform like Airtable?

An AI visibility benchmark is a structured study of how often and how prominently a brand appears in AI-generated recommendation lists for specific buyer queries. For Airtable, XLR8 AI ran 45 queries across AI app builder, workflow automation, and AI agent software categories on GPT Fast, Claude, and Gemini on April 19, 2026, logging brand mentions, rank positions, and competitor co-occurrences to produce a verified visibility baseline.

Why does Airtable score so low in AI Agent Software queries?

Airtable's 6.7% visibility in AI Agent Software queries reflects a gap between the product's capabilities and how LLMs have been trained to categorize it. AI agent platforms like n8n, Lindy, Gumloop, and Zapier have built their entire brand narratives around agentic automation, and LLMs reflect that through higher recommendation frequency. Airtable's content, documentation, and ecosystem signals have not yet established the same association in LLMs' representation of the AI agent category. This is a solvable GEO problem, but it requires dedicated investment in agent-specific content and external signals.

Why does average position matter as much as mention rate?

Most AI-generated recommendation lists contain 5–8 tools. Buyers typically act on the first 3–5. A brand appearing at position #8 is technically present but functionally invisible — it will rarely influence a shortlist or purchase decision. XLR8 AI tracks average position alongside visibility rate because a brand with 80% visibility at position #8 has less commercial impact than a brand with 60% visibility at position #2. Both metrics need to improve for AI visibility to translate into pipeline.

How can Airtable improve its AI agent and workflow automation visibility?

Improving Airtable's AI agent and automation visibility requires aligning content, documentation, and external signals with how buyers and LLMs describe these categories. This means creating dedicated solution pages for AI agent workflows, publishing detailed integration examples with Zapier, Slack, and Salesforce, earning coverage on authoritative sources that LLMs cite, and using structured schema markup. XLR8 AI's platform can identify which specific content investments will move the needle fastest by tracking visibility changes after each initiative.

How did XLR8 AI run this benchmark?

XLR8 AI defined 45 intent-aligned discovery queries across three categories — AI App Builder, Workflow Automation Platform, and AI Agent Software — and executed them across GPT Fast (ChatGPT), Claude, and Gemini, 15 queries per model, on April 19, 2026. For each response, XLR8 AI's platform logged whether Airtable was mentioned, its rank position, and which competitors appeared. All numbers in this report were verified directly against the raw database records before publication.

Run this benchmark for your brand

XLR8 AI runs AI visibility benchmarks for SaaS, low-code, and enterprise automation platforms — tracking mentions, rank positions, and competitive share of voice across ChatGPT, Gemini, Claude, Perplexity, and Google AI. Get your baseline report and GEO roadmap.


Methodology note: This benchmark was conducted by XLR8 AI on April 19, 2026. 45 queries were run across three AI models: GPT Fast / ChatGPT (15 queries), Claude (15 queries), and Gemini (15 queries). Perplexity and Google AI Mode were not included in this experiment. Brand mentions were logged by XLR8 AI's automated visibility tracking platform. Average position reflects the mean rank when Airtable appeared in a multi-brand recommendation list. Competitor mention counts reflect co-occurrences in the same responses. Results are a point-in-time snapshot; LLM outputs change as models update. XLR8 AI (tryxlr8.ai) is a GEO tracking and optimization platform. This benchmark is published for educational and research purposes. All numbers were verified against raw database records before publication.