Ramp AI Visibility Benchmark 2026: How Ramp Appears Across ChatGPT, Perplexity & Google AI

We ran 44 structured queries across three major AI models to measure how often Ramp gets recommended when finance leaders ask about spend management, corporate cards, and finance automation. The overall numbers are strong, but a critical Perplexity gap in finance automation opens the door for Brex and Airbase.

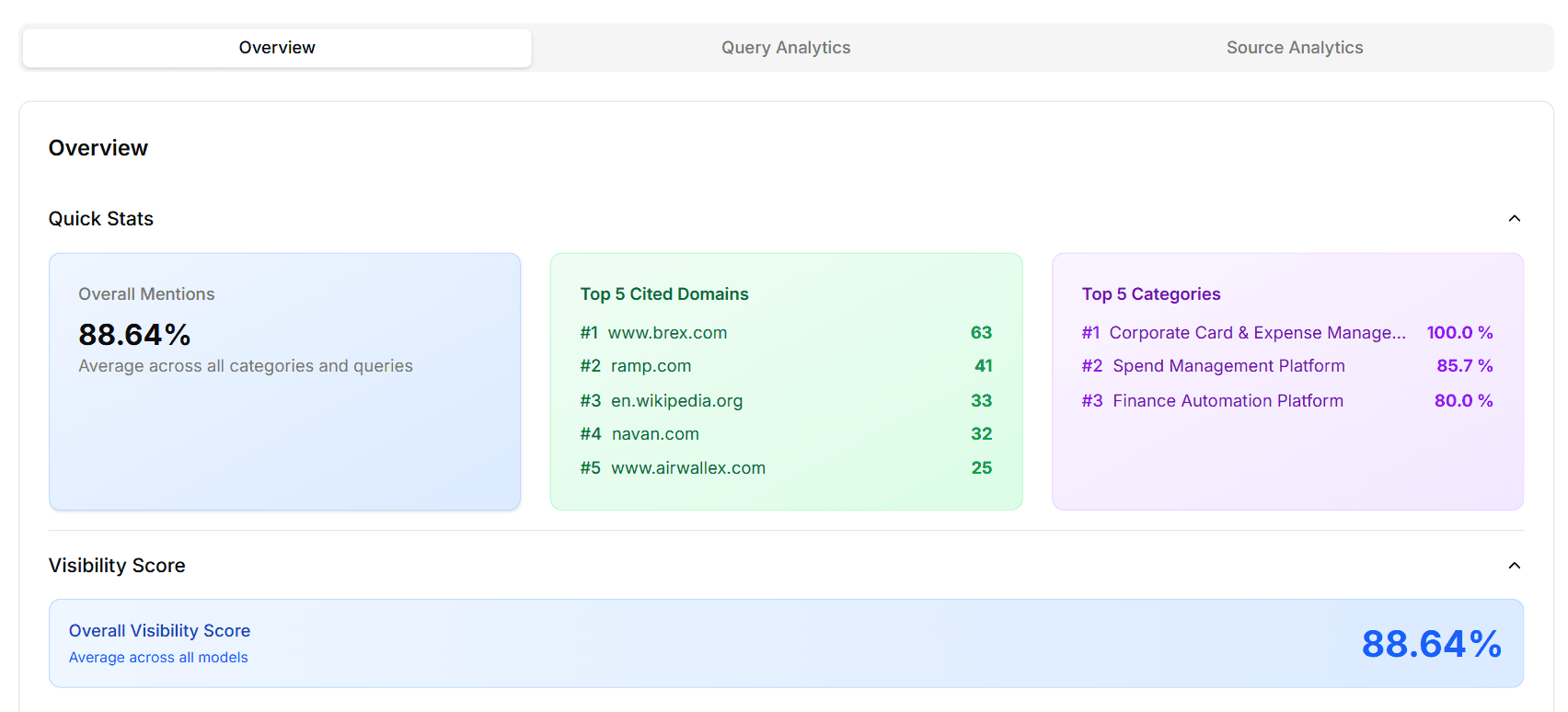

Key Numbers at a Glance

88.6% Overall visibility (39 of 44 queries)

#2.1 Avg brand position when mentioned

39 Total mentions across all models

73.3% Perplexity rate vs 100% on Google AI

Finance leaders researching spend management platforms are no longer starting with a traditional Google search. They are asking ChatGPT, Perplexity, and Google AI Mode which corporate card and expense solutions to evaluate. The short list an AI assistant returns often determines which vendors enter a formal review, and brands that don't appear in those lists lose pipeline before a sales conversation ever begins.

To measure exactly where Ramp stands in this AI-driven buying journey, XLR8 AI ran a structured GEO (Generative Engine Optimization) benchmark on April 19, 2026 — 44 intent-aligned queries across Google AI Mode, GPT Fast, and Perplexity, covering Ramp's three core positioning categories. This report breaks down every finding: overall visibility, model-level performance, category breakdown, competitive share of voice, and what Ramp should do next.

Why Ramp's AI Visibility Matters in 2026

In 2026, AI assistants are acting as front doors to B2B fintech discovery. When a finance leader asks an AI for the best spend management platform, the LLM's short list often replaces a full comparison search. For Ramp, consistent presence — and strong positioning — in those short lists is now as strategically important as paid demand gen and content marketing.

XLR8 AI's GEO benchmarks help enterprise fintech brands understand where they are already trusted by LLMs and where gaps quietly shift pipeline toward competitors. Ramp's 26.7-point Perplexity gap, specifically in finance automation queries, is exactly the kind of blind spot that costs pipeline without ever appearing in a traditional analytics dashboard.

Experiment Overview: Scope, Queries, and Methodology

XLR8 AI designed this benchmark using its AI visibility tracking platform. Here is exactly how it was run:

Total queries: 44 intent-aligned discovery queries across three categories

Models tested: Google AI Mode (15 queries), GPT Fast / ChatGPT (14 queries), Perplexity (15 queries)

Categories: Corporate Card & Expense Management, Spend Management Platform, Finance Automation Platform

Metrics captured: Brand mention (yes/no), rank position when mentioned, competitor co-occurrences

Company: Ramp (ramp.com)

Date run: April 19, 2026

For each query, XLR8 AI's platform logged whether Ramp was mentioned, its relative rank position, and which competitor brands appeared in the same answer. This structure allows Ramp to rerun the identical benchmark later to measure the impact of any GEO initiatives.
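The three logged fields map naturally onto a per-answer record. The following is a minimal sketch of how such a log could be structured and scored, not XLR8 AI's actual platform code: the `QueryResult` class, its field names, and the two sample records are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class QueryResult:
    """One logged answer: a single query run against a single model (hypothetical schema)."""
    model: str
    category: str
    query: str
    brand_mentioned: bool
    rank: Optional[int] = None  # position in the answer, only set when mentioned
    competitors: List[str] = field(default_factory=list)


def visibility_rate(results: List[QueryResult]) -> float:
    """Share of answers that mention the brand, as a percentage."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if r.brand_mentioned)
    return round(100 * hits / len(results), 1)


def average_position(results: List[QueryResult]) -> Optional[float]:
    """Mean rank across answers where the brand was mentioned and ranked."""
    ranks = [r.rank for r in results if r.brand_mentioned and r.rank is not None]
    return round(sum(ranks) / len(ranks), 1) if ranks else None


# Two illustrative records only; these are NOT rows from the benchmark log.
sample = [
    QueryResult("Perplexity", "Finance Automation Platform",
                "placeholder finance automation query", False,
                competitors=["Airbase", "Tipalti"]),
    QueryResult("Google AI Mode", "Corporate Card & Expense Management",
                "placeholder corporate card query", True, rank=1,
                competitors=["Brex"]),
]

print(visibility_rate(sample))   # 50.0
print(average_position(sample))  # 1.0
```

Keeping one record per answer means a rerun of the same query set can be scored with the same two functions, which is what makes before-and-after comparison possible.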

Headline Results: Ramp's 2026 AI Visibility Snapshot

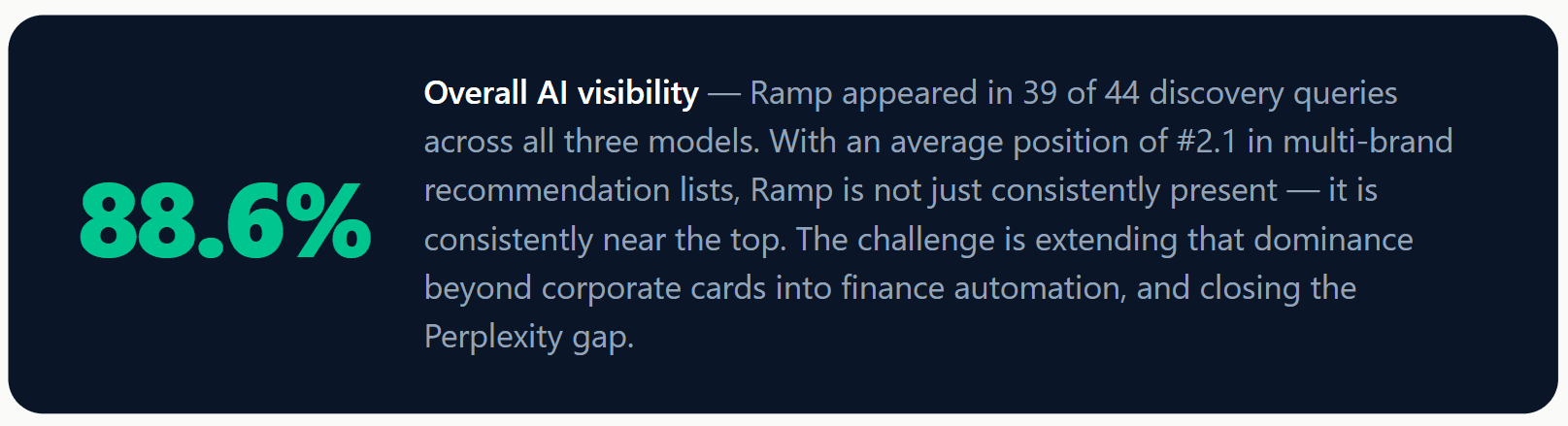

Ramp's 88.6% overall visibility and #2.1 average position are a strong foundation. These numbers confirm that LLMs already treat Ramp as a trusted, top-tier recommendation in spend management and corporate card discovery. However, the model-level and category-level breakdowns reveal two specific gaps where competitors can and do displace Ramp in AI-generated shortlists.

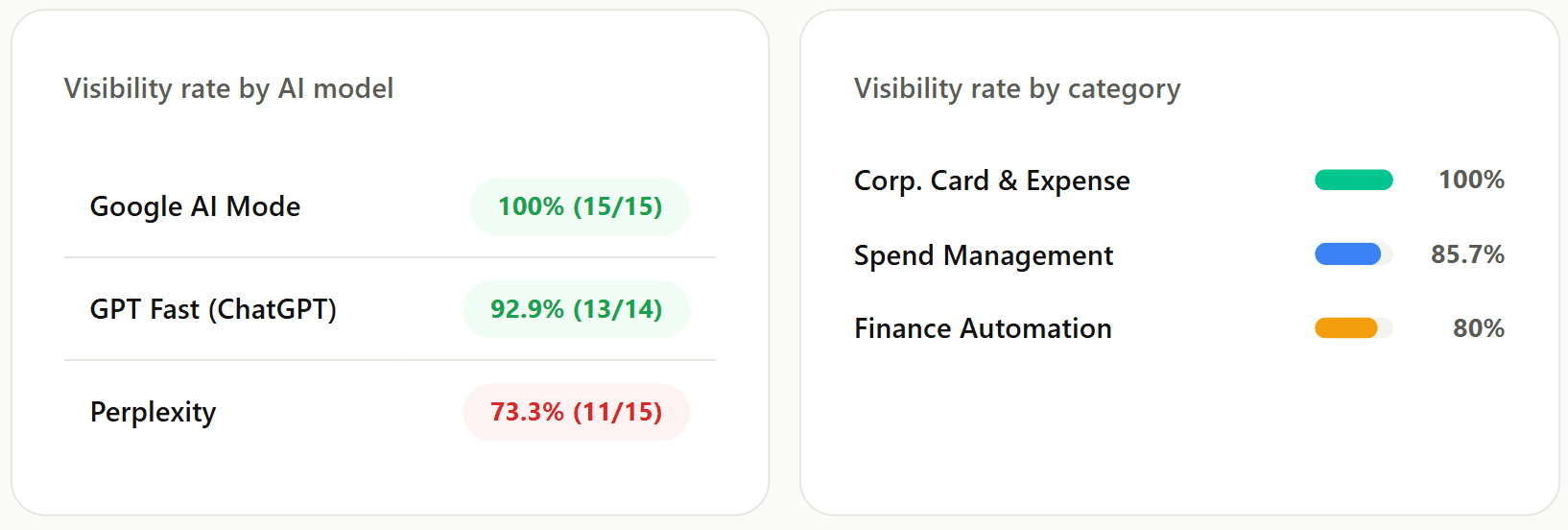

Model-Level Performance: ChatGPT, Perplexity, and Google AI

Google AI Mode: Perfect 100% Visibility

In Google AI Mode, Ramp achieved a perfect 100% visibility rate, appearing in all 15 queries tested. This confirms that Google's AI layer has a strong, well-established association between Ramp and all three query categories. Ramp's web authority, product pages, and content footprint are clearly aligned with what Google AI retrieves for spend management and corporate card queries. Future GEO work on this model can focus on rank order and narrative framing rather than basic inclusion.

GPT Fast (ChatGPT): Strong at 92.9%

On GPT Fast, Ramp appeared in 13 of 14 queries for a 92.9% visibility rate. (One query did not execute in this run.) ChatGPT reliably surfaces Ramp as a leading spend management and corporate card solution. With only a single miss, and an average position of 2.1 across all models, there is no structural gap to address here. The priority is to maintain and deepen the Ramp-specific content in ChatGPT's training data.

Perplexity: The 26.7-Point Gap That Demands Attention

Critical finding: Perplexity only surfaces Ramp 73.3% of the time

Ramp appeared in just 11 of 15 Perplexity queries, a 73.3% visibility rate versus a perfect 100% on Google AI Mode. This 26.7-point gap is the most actionable finding in the entire benchmark. XLR8 AI's logs show that the four missed queries are all enterprise and CFO-intent prompts, such as "unified spend management platforms combining procurement, AP automation and business banking" and "unified finance automation platforms centralizing procurement." These are exactly the high-value queries where Ramp's absence from Perplexity's answer quietly shifts shortlist inclusion to Brex, Airbase, and Tipalti.
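The model-level rates and the gap can be reproduced from the mention counts reported above. This is a small arithmetic sketch; the variable names are illustrative, and the counts are the ones stated in this report.

```python
# (queries where Ramp was mentioned, queries executed) per model, as reported above
by_model = {
    "Google AI Mode": (15, 15),
    "GPT Fast": (13, 14),
    "Perplexity": (11, 15),
}

# Visibility rate per model, in percent
rates = {model: round(100 * hits / total, 1) for model, (hits, total) in by_model.items()}

# Percentage-point gap between the best and weakest model
gap = round(rates["Google AI Mode"] - rates["Perplexity"], 1)

# Overall visibility across all executed queries
total_hits = sum(hits for hits, _ in by_model.values())
total_queries = sum(total for _, total in by_model.values())
overall = round(100 * total_hits / total_queries, 1)

print(rates)    # {'Google AI Mode': 100.0, 'GPT Fast': 92.9, 'Perplexity': 73.3}
print(gap)      # 26.7
print(overall)  # 88.6
```

Note that the gap is expressed in percentage points (100.0 minus 73.3), not as a relative percentage change.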

Category Performance: Where Ramp Wins and Where It Trails

Corporate Card & Expense Management: 100% — Ramp's Anchor

Ramp's strongest category is exactly where the brand originated. A perfect 100% visibility rate across all 15 corporate card and expense management queries confirms that LLMs consistently and confidently identify Ramp as a leader in this space. This category is Ramp's AI visibility anchor — the foundation that all other GEO work should build from, not fix.

Spend Management Platform: 85.7% — Solid, with Room to Grow

In the Spend Management Platform category, Ramp appeared in 12 of 14 queries (85.7%). This is strong, but the slight drop from corporate card performance reflects the fact that spend management queries attract a broader competitive set: Brex, Airbase, Spendesk, and Payhawk all appear with higher frequency here. Given the model-level totals, one of the two missed queries was on Perplexity and the other was the single GPT Fast miss.

Finance Automation Platform: 80% — The Strategic Gap

Finance Automation is Ramp's weakest category — driven by Perplexity misses

Ramp appeared in only 12 of 15 Finance Automation Platform queries (80%). All three misses are on Perplexity, which in this category instead favors Airbase, Tipalti, and BILL. XLR8 AI interprets this as a signal that Perplexity's retrieval patterns for finance automation queries lean toward vendors with dedicated AP automation and payables narratives — and that Ramp's current content footprint does not yet match that retrieval pattern as strongly as its corporate card content does.
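As a sanity check, the category breakdown should reconcile with the model breakdown: both slice the same 44 answers, so both must account for the same number of misses. A brief sketch using the counts reported in this benchmark (helper names are illustrative):

```python
# (queries where Ramp was mentioned, queries executed), as reported in this benchmark
by_category = {
    "Corporate Card & Expense Management": (15, 15),
    "Spend Management Platform": (12, 14),
    "Finance Automation Platform": (12, 15),
}
by_model = {
    "Google AI Mode": (15, 15),
    "GPT Fast": (13, 14),
    "Perplexity": (11, 15),
}


def rate(hits: int, total: int) -> float:
    """Visibility rate in percent, rounded to one decimal place."""
    return round(100 * hits / total, 1)


def misses(breakdown: dict) -> int:
    """Total number of answers in which Ramp was not mentioned."""
    return sum(total - hits for hits, total in breakdown.values())


category_rates = {name: rate(hits, total) for name, (hits, total) in by_category.items()}

print(category_rates["Spend Management Platform"])    # 85.7
print(category_rates["Finance Automation Platform"])  # 80.0
print(misses(by_category), misses(by_model))          # 5 5
```

Both views account for the same five missed answers out of 44, which is consistent with the 88.6% overall figure.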

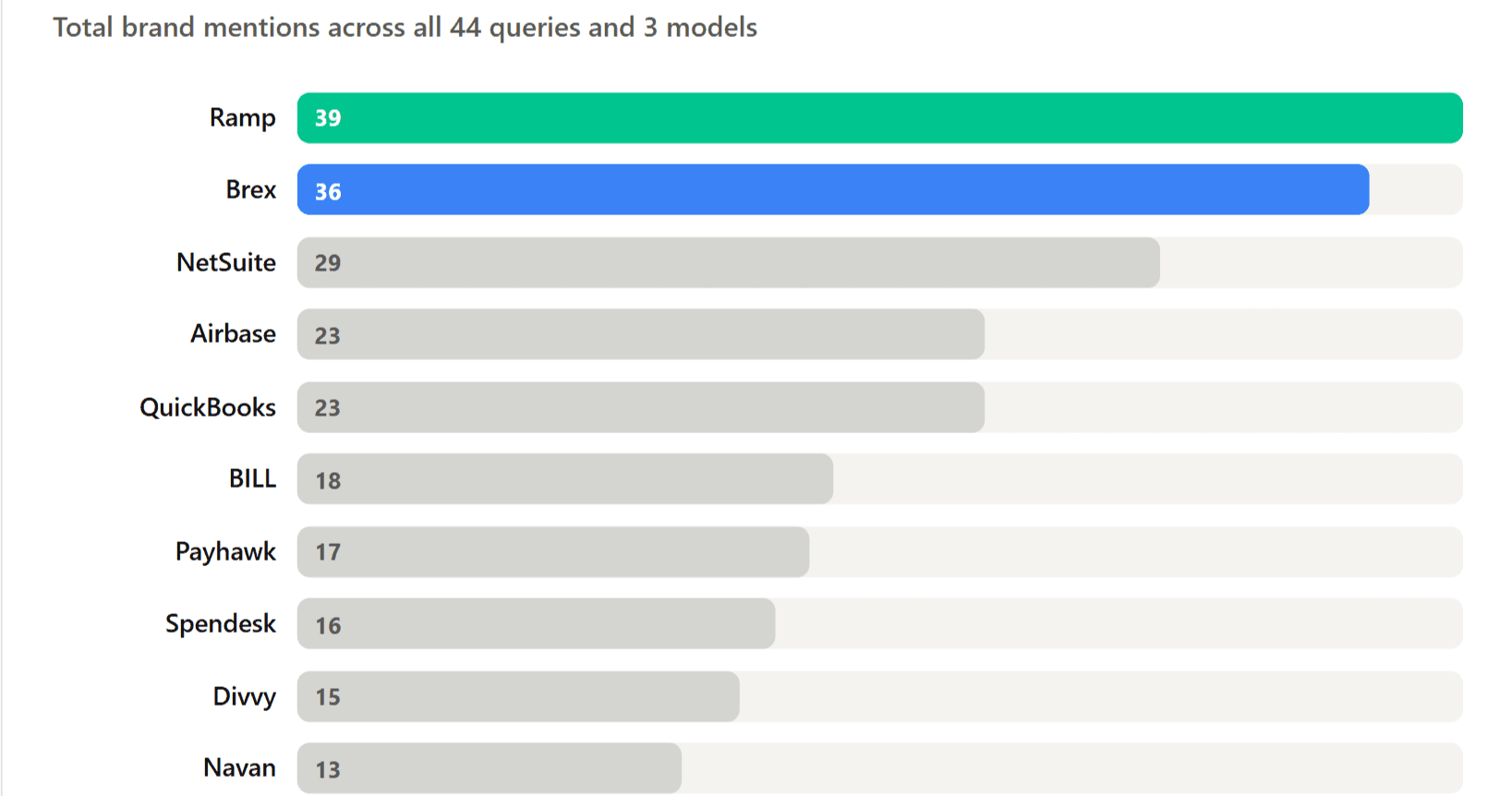

Competitive Landscape: Who Appears Alongside Ramp

XLR8 AI's benchmark tracked not only Ramp's mentions but which competitors appeared in the same AI answers. This co-occurrence data reveals which brands LLMs treat as Ramp's peer set — and where Ramp risks being displaced on specific query types.

Brex as Co-Leader: 36 Mentions to Ramp's 39

Brex's 36 mentions across all 44 queries make it the closest co-leader in AI-generated spend management recommendations. In the majority of responses where Ramp appears, Brex appears in the same list — typically within one or two positions. For Ramp, this co-leadership means that brand differentiation in AI answers is critical: appearing alongside Brex is not a problem, but being framed as interchangeable with it is.

NetSuite and ERP Crossover

NetSuite's 29 mentions are notable because it is not a direct corporate card competitor — it is an ERP platform. Its high appearance rate reflects that some queries, particularly those framing "finance automation platform" or "unified spend management," are being answered with a broader mix of accounting and ERP tools alongside dedicated spend management platforms. This ERP crossover is one reason Ramp can lose ground in Finance Automation category queries where the competitive set expands beyond pure-play spend tools.

Airbase, BILL, and Tipalti: The AP Automation Cluster

Airbase (23), BILL (18), and Tipalti (13) are Ramp's most significant rivals in Finance Automation queries specifically. These three brands have built strong content and third-party authority around AP automation, payables, and procurement workflows — the exact areas where Perplexity currently omits Ramp. Improving Ramp's visibility against these three tools in Perplexity is the single highest-priority competitive GEO action this benchmark reveals.
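The mention counts in this section can be turned into a simple share-of-voice view. The sketch below assumes each count represents the number of answers (out of 44) in which the brand appeared at least once; that reading matches how Brex's 36 is described in this report, but it is an assumption for the other brands.

```python
TOTAL_QUERIES = 44

# Mention counts as reported in this section
mentions = {
    "Ramp": 39,
    "Brex": 36,
    "NetSuite": 29,
    "Airbase": 23,
    "BILL": 18,
    "Tipalti": 13,
}

# Share of answers in which each brand appears, in percent
mention_rate = {brand: round(100 * n / TOTAL_QUERIES, 1) for brand, n in mentions.items()}

# How many mentions each competitor trails Ramp by
gap_to_ramp = {brand: mentions["Ramp"] - n for brand, n in mentions.items() if brand != "Ramp"}

for brand, n in sorted(mentions.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{brand:<9} {n:>2} mentions  {mention_rate[brand]:>5}%")
```

On these numbers Brex trails Ramp by three mentions, while the AP automation cluster trails by 16 to 26, which is why the Perplexity-specific misses matter more than the aggregate counts suggest.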

5 Key Findings from the Ramp GEO Benchmark

Corporate Card dominance is real — and must be the anchor for all GEO work

100% visibility in Corporate Card & Expense Management across all three models is a strong strategic asset. LLMs consistently and confidently route corporate card and expense queries to Ramp. Future GEO work should reinforce this foundation — fresh case studies, updated documentation, and third-party citations — so that as models update, Ramp retains this default status.

The Perplexity gap (73.3%) is an enterprise pipeline risk

The 26.7-point gap between Perplexity (73.3%) and Google AI Mode (100%) is concentrated in enterprise and CFO-intent queries about unified spend management and finance automation. These are the exact prompts that high-value, enterprise-intent buyers use. Missing from Perplexity's answer on these queries means Ramp is absent from a significant slice of early-stage enterprise evaluation.

Brex is a co-leader with 36 mentions — differentiation in AI answers is critical

With Brex appearing in 36 of 44 queries — just 3 behind Ramp — LLMs currently treat these two brands as near-interchangeable in most spend management and corporate card answers. For Ramp, the GEO priority is not just to appear, but to shape how AI systems frame Ramp relative to Brex: specific strengths, distinct use cases, and differentiated outcomes that LLMs can surface in their reasoning.

Average position of #2.1 is an exceptional strength to protect

An average position of 2.1 when mentioned means Ramp typically sits at or near the top of AI recommendation lists. This is a significant defensive asset. The GEO strategy should be to extend this strong ranking into Finance Automation and Perplexity: the goal is not to fix rank where it is already near-optimal, but to ensure the same quality of positioning appears in the weaker categories and models.

Finance Automation weakness is concentrated in Perplexity's AP-intent queries

All three Finance Automation category misses are on Perplexity, and all involve prompts about unified procurement, AP automation, and centralized finance operations. Perplexity favors Airbase, Tipalti, and BILL on these queries — vendors that have built dedicated AP automation narratives. Ramp's content and third-party signal investments in this area are not yet matching the retrieval patterns Perplexity uses for these intents.

5 GEO Strategies Ramp Should Execute

Build dedicated Finance Automation content aligned to AP and procurement intent

Perplexity misses Ramp on unified spend management and AP automation queries, most likely because it does not find strong, structured Ramp content for those intents. Create dedicated solution pages and long-form guides on ramp.com covering accounts payable automation, procurement workflows, and centralized finance operations, with clear headings, FAQs, and consistent entity naming. Mirror the exact language Perplexity queries use: "unified finance automation," "AP automation platform," "procurement and spend management."

Build third-party signals specifically for Perplexity's source ecosystem

Perplexity relies heavily on real-time external sources. In this experiment, ramp.com was cited 41 times while brex.com was cited 63 times, which suggests Brex has built a stronger citation footprint. Identify which publications and domains Perplexity cites for finance automation queries and execute a targeted PR and content partnership plan to earn Ramp mentions in those exact sources. Analyst coverage, CFO-oriented editorial sites, and structured comparison posts are the formats Perplexity trusts.

Differentiate against Brex with explicit, AI-parseable positioning

Brex's co-leadership (36 mentions) means Ramp needs to give LLMs specific, structured reasons to prefer Ramp for different use cases. Publish clear comparison content (Ramp vs Brex, Ramp vs Airbase), solution-specific pages that emphasize Ramp's automation depth, and customer outcome stories with concrete metrics. When AI systems encounter these assets, they are more likely to surface differentiated framing rather than grouping Ramp and Brex as equivalent options.

Protect and deepen Google AI Mode and ChatGPT performance

Perfect visibility on Google AI Mode and 92.9% on ChatGPT are assets that require active maintenance, not just appreciation. As models update, rankings can shift. Keep product pages, documentation, and schema markup (SoftwareApplication, FAQPage) current. Add quarterly content refreshes to the top-performing corporate card and spend management pages so Google AI and ChatGPT continue to treat Ramp as the authoritative source in these categories.
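For the schema markup mentioned above, FAQPage structured data is usually published as JSON-LD. The following sketch generates such a block from question-and-answer pairs; the `faq_jsonld` helper is hypothetical, and the sample pair is taken from this report's own FAQ rather than from any Ramp page.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "What is an AI visibility benchmark for a brand like Ramp?",
        "An AI visibility benchmark is a structured study of how often and how "
        "prominently a brand appears in AI-generated answers for specific "
        "discovery queries.",
    ),
])

# Serialize for embedding in a <script type="application/ld+json"> tag
markup = json.dumps(faq, indent=2)
print(markup)
```

Generating the markup from the same source as the visible FAQ text keeps the two in sync when pages are refreshed each quarter.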

Track competitive movements monthly with a recurring GEO experiment

Brex, Airbase, and Tipalti are all actively investing in content and GEO. A benchmark is a point-in-time snapshot — Ramp needs a recurring measurement cadence to detect when a competitor is gaining share of voice in a specific model or category before it compounds into a pipeline problem. XLR8 AI recommends rerunning this exact 44-query experiment on a monthly basis and using the data to drive GEO sprint priorities.
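A recurring run is only useful if changes between runs are flagged automatically. Below is a minimal sketch of such a comparison. The baseline uses this report's model-level rates; the follow-up values, the `flag_regressions` helper, and the 5-point threshold are invented purely for illustration and are not benchmark results.

```python
def flag_regressions(baseline, current, threshold=5.0):
    """Return (key, delta) pairs where visibility dropped by at least `threshold` points."""
    flagged = []
    for key, base_rate in baseline.items():
        if key not in current:
            continue  # segment not measured in the newer run
        delta = round(current[key] - base_rate, 1)
        if delta <= -threshold:
            flagged.append((key, delta))
    return flagged


# Baseline: visibility rates (%) from this April 19, 2026 run
baseline = {"Google AI Mode": 100.0, "GPT Fast": 92.9, "Perplexity": 73.3}

# HYPOTHETICAL follow-up run, made up to demonstrate the check
follow_up = {"Google AI Mode": 100.0, "GPT Fast": 92.9, "Perplexity": 60.0}

print(flag_regressions(baseline, follow_up))  # [('Perplexity', -13.3)]
```

The same function works for category-level or competitor-level rates, as long as both runs use the identical query set so the deltas are comparable.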

Key takeaways from this benchmark

Ramp achieves 88.6% overall AI visibility — strong, but not yet category dominance in finance automation

Average position of #2.1 is excellent — Ramp ranks near the top when it appears

Google AI Mode: 100%. ChatGPT: 92.9%. Perplexity: 73.3% — the 26.7-point gap is the priority

Corporate Card & Expense Management: 100% — Ramp's strongest and most-trusted category

Finance Automation: 80% — all misses on Perplexity, all AP/procurement-intent queries

Brex is the closest rival at 36 mentions — near-interchangeable positioning in most answers

Airbase, Tipalti, and BILL are displacing Ramp specifically on Perplexity's AP automation queries

What B2B Fintech Teams Get From AI Visibility Benchmarking

This Ramp benchmark is representative of the GEO analysis every enterprise fintech brand should be running in 2026. Here is what a structured, recurring GEO program delivers:

Quantified AI share of voice

Turn AI visibility from anecdotal screenshots into trackable metrics — visibility %, average position, competitor mentions — that sit alongside traditional demand gen KPIs.

Early warning on competitive shifts

LLM outputs can reveal competitive movements before traditional analytics do. XLR8 AI tracking gives teams time to respond before a Brex or Airbase gain compounds into pipeline loss.

Content investment prioritization

Instead of guessing which topics will influence AI visibility, use experiment data to direct content effort toward the specific categories and query intents that actually move the score.

Category narrative alignment

Seeing how ChatGPT and Perplexity describe Ramp today reveals which product strengths LLMs emphasize — and which adjacent categories (like AP automation) need stronger signal investment.

Frequently Asked Questions

What is an AI visibility benchmark for a brand like Ramp?

An AI visibility benchmark is a structured study of how often and how prominently a brand appears in AI-generated answers for specific discovery queries. For Ramp, XLR8 AI ran 44 spend management, corporate card, and finance automation queries across ChatGPT, Perplexity, and Google AI Mode on April 19, 2026, logging brand mentions, rank position, and competitor co-occurrences to produce a quantified AI visibility baseline.

Why do B2B fintech teams need GEO tracking for spend management and corporate cards?

Finance leaders increasingly rely on AI assistants to research and shortlist vendors. For spend management and corporate card brands, missing from AI recommendations means missing from early evaluation cycles. XLR8 AI's Ramp benchmark shows that even a well-recognized brand like Ramp has model-specific gaps — particularly on Perplexity — where competitors can quietly displace it in high-value enterprise queries.

How can Ramp improve its visibility in finance automation and Perplexity queries?

Ramp can improve its Finance Automation and Perplexity visibility by creating dedicated content around AP automation, procurement workflows, and unified finance operations — using the exact language Perplexity queries employ. This should be supported by third-party signals (analyst coverage, editorial placements, comparison content) on the domains Perplexity cites most often for CFO-intent queries. XLR8 AI's platform can identify which specific sources and content types will move the needle fastest.

How does Ramp compare to Brex in AI-generated recommendations?

Ramp leads Brex by a narrow margin in this benchmark — 39 mentions to 36 across 44 queries — but the two brands appear together in most responses. LLMs currently treat Ramp and Brex as near-interchangeable co-leaders in spend management and corporate card queries. For Ramp, the GEO priority is to create clearer differentiation signals in content and third-party coverage so AI systems can articulate specific reasons to prefer Ramp for defined use cases.

How did XLR8 AI run this benchmark?

XLR8 AI defined 44 intent-aligned discovery queries across three categories — Corporate Card & Expense Management, Spend Management Platform, and Finance Automation Platform — and executed them across Google AI Mode, GPT Fast, and Perplexity on April 19, 2026. For each answer, XLR8 AI's platform logged whether Ramp was mentioned, its rank position, and which competitors appeared. The structured methodology allows the same benchmark to be rerun on a regular cadence to measure GEO progress.

Run this benchmark for your brand

XLR8 AI runs AI visibility benchmarks for B2B fintech, SaaS, and enterprise brands — tracking mentions, competitive share of voice, and model-level performance across ChatGPT, Perplexity, and Google AI. Get your baseline report and GEO roadmap.
