Retool AI Visibility Benchmark 2026: How Retool Appears Across ChatGPT, Perplexity & Claude

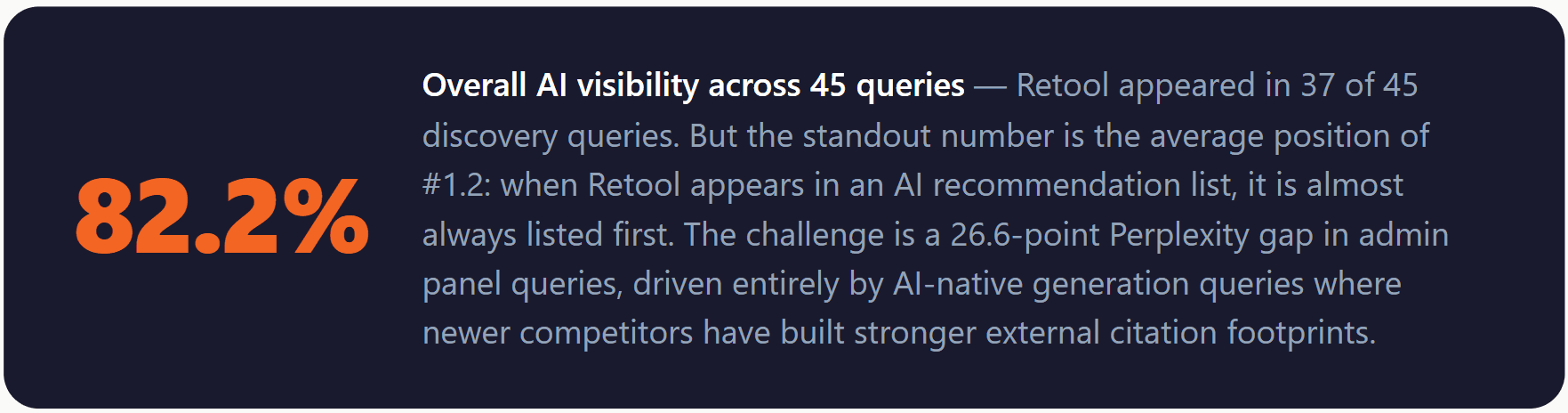

We ran 45 structured queries across three major AI models to measure how often Retool gets recommended when developers and engineering teams ask about internal tools builders, low-code platforms, and admin panel builders. The results show an exceptional average position and one concentrated gap that competitors are actively moving into.

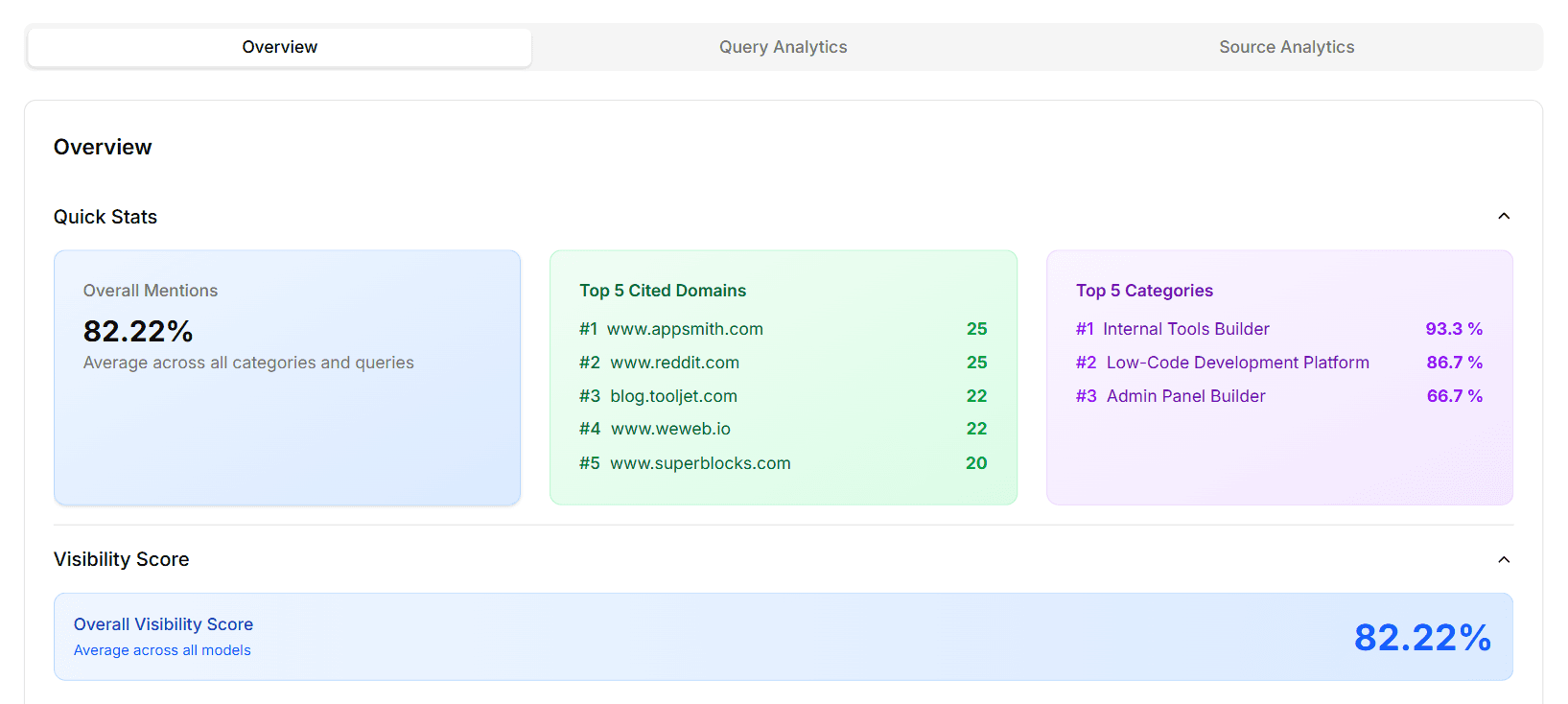

Key Numbers at a Glance

82.2% Overall visibility (37 of 45 queries)

#1.2 Avg brand position when mentioned

37 Total mentions across all models

66.7% Perplexity rate vs 93.3% on ChatGPT

Engineering teams and developers researching internal tools platforms no longer start with Google alone. They ask ChatGPT, Perplexity, and Claude which low-code builders to evaluate — and the short list those models return often determines which tools enter a serious proof-of-concept. For Retool, consistent presence in those lists is as strategically important as any organic search ranking.

To quantify exactly where Retool stands in this AI-driven evaluation journey, XLR8 AI ran a structured GEO (Generative Engine Optimization) benchmark on April 21, 2026 — 45 intent-aligned queries across GPT Fast (ChatGPT), Perplexity, and Claude, covering Retool's three core positioning categories. This report breaks down every finding.

Experiment Overview: Scope, Queries, and Methodology

XLR8 AI designed this benchmark using its AI visibility tracking platform. Here is exactly how it was run:

Total queries: 45 intent-aligned discovery queries across three categories

Models tested: GPT Fast / ChatGPT (15 queries), Perplexity (15 queries), Claude (15 queries)

Categories: Admin Panel Builder, Internal Tools Builder, Low-Code Development Platform

Metrics captured: Brand mention (yes/no), rank position when mentioned, competitor co-occurrences

Company: Retool (retool.com)

Date run: April 21, 2026

For each query, XLR8 AI's platform logged whether Retool was mentioned, its rank when it appeared, and which competitor brands appeared in the same answer. This methodology allows Retool to rerun the same benchmark later and measure the impact of any GEO initiatives.
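
The two headline metrics can be derived from per-query logs like the ones described above. The sketch below is a minimal illustration that assumes a hypothetical record layout: a `QueryResult` with `model`, `query`, and an optional `retool_rank`. This report does not describe XLR8 AI's actual schema. Visibility is the share of answers that mention Retool. Average position is the mean rank over only the answers that mention it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QueryResult:
    """One logged answer. Hypothetical layout, not XLR8 AI's actual schema."""
    model: str                  # e.g. "Perplexity"
    query: str                  # the discovery query text
    retool_rank: Optional[int]  # 1-based position in the list, or None if not mentioned


def visibility_rate(results: list[QueryResult]) -> float:
    """Percentage of answers that mention the brand at all."""
    mentioned = sum(1 for r in results if r.retool_rank is not None)
    return 100.0 * mentioned / len(results)


def average_position(results: list[QueryResult]) -> Optional[float]:
    """Mean rank, computed only over answers that mention the brand."""
    ranks = [r.retool_rank for r in results if r.retool_rank is not None]
    return sum(ranks) / len(ranks) if ranks else None


if __name__ == "__main__":
    # Toy input for illustration only; these are not the benchmark's records.
    demo = [
        QueryResult("ChatGPT", "best internal tools builders", 1),
        QueryResult("Claude", "best internal tools builders", 2),
        QueryResult("Perplexity", "best internal tools builders", None),
    ]
    print(f"{visibility_rate(demo):.1f}%")  # 66.7%
    print(average_position(demo))           # 1.5
```

Misses are excluded from the average, so a brand can pair a strong average position (1.2 here) with a visibility rate well below 100%. The two metrics answer different questions. For this benchmark's 37 mentioned answers out of 45, `visibility_rate` would return about 82.2%.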

Headline Results: Retool's 2026 AI Visibility Snapshot

The #1.2 average position is exceptional. By the standard of the brands XLR8 AI has benchmarked, a sub-1.5 average position means Retool is not just included in AI recommendation lists: it is almost always the brand listed first. The strategic priority is therefore not broad inclusion work. It is closing the specific Perplexity admin panel gap before Appsmith and Superblocks consolidate their positions there.

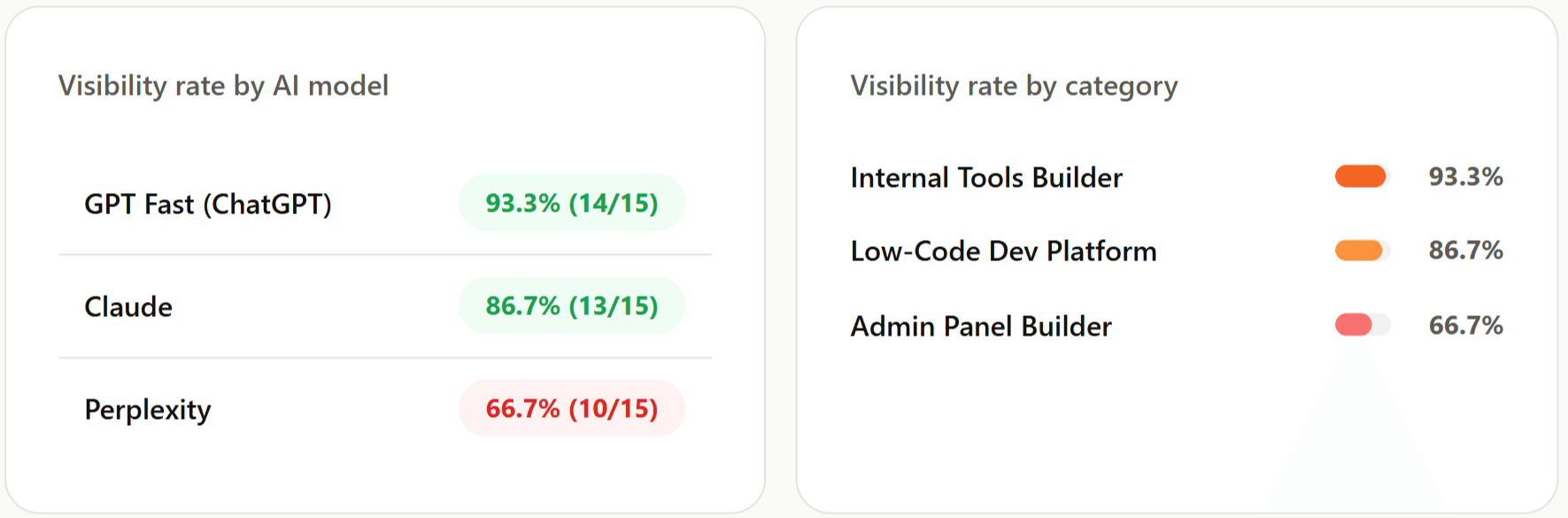

Model-Level Performance: ChatGPT, Perplexity and Claude

GPT Fast (ChatGPT): 93.3% — Retool's Strongest Model

ChatGPT mentioned Retool in 14 of 15 queries (93.3%). The single miss was the "AI-assisted admin panel builders that can auto-generate internal apps from data models and prompts" query — signalling that ChatGPT occasionally routes AI-native admin generation queries away from Retool. For GEO strategy on ChatGPT, the priority is reinforcing Retool's AI capabilities narrative and improving rank order, not fixing basic inclusion.

Claude: 86.7% — Reliable with a Specific AI-Native Blind Spot

Claude mentioned Retool in 13 of 15 queries (86.7%). Both misses follow the exact same pattern as ChatGPT's single miss: queries framing internal tool builders as "AI-native" or "prompt-based app generation." Claude associates Retool strongly with drag-and-drop internal tools and SQL-connected admin panels, but is less confident routing AI-native app generation queries to Retool. This is a content and positioning gap, not a brand awareness gap.

Perplexity: 66.7% — The 26.6-Point Gap That Demands Attention

Critical finding: Perplexity only surfaces Retool 66.7% of the time

Perplexity mentioned Retool in only 10 of 15 queries — a 66.7% rate versus 93.3% on ChatGPT. This 26.6-point gap is the most actionable finding in the benchmark. All 5 Perplexity misses cluster in two query types: AI-assisted admin panel builders that auto-generate apps from prompts or data models, and enterprise-ready low-code platforms for engineering teams. On both query types, Perplexity instead surfaces Appsmith, Tooljet, UI Bakery, and Superblocks. Perplexity's citation data from this experiment shows appsmith.com (25 cites) and blog.tooljet.com (22 cites) far outpacing retool.com (17 cites) — competitors have built stronger external citation footprints in the sources Perplexity trusts most.
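
The model-level rates and the gap follow directly from the counts reported above. The 26.6-point figure is the difference between the rounded rates (93.3 minus 66.7). Computed from the raw counts, the gap is 4/15, or about 26.7 percentage points. The sketch below uses only the reported counts.

```python
# Mention counts as reported in this benchmark (15 queries per model).
MENTIONS = {"GPT Fast (ChatGPT)": 14, "Claude": 13, "Perplexity": 10}
QUERIES_PER_MODEL = 15


def rate(mentions: int, total: int) -> float:
    """Visibility rate as a percentage."""
    return 100.0 * mentions / total


rates = {model: rate(n, QUERIES_PER_MODEL) for model, n in MENTIONS.items()}
overall = rate(sum(MENTIONS.values()), QUERIES_PER_MODEL * len(MENTIONS))

# Gap in percentage points: from raw counts, and from the rounded display values.
raw_gap = rates["GPT Fast (ChatGPT)"] - rates["Perplexity"]
rounded_gap = round(rates["GPT Fast (ChatGPT)"], 1) - round(rates["Perplexity"], 1)

for model, r in rates.items():
    print(f"{model}: {r:.1f}%")
print(f"Overall: {overall:.1f}%")
print(f"Gap: {raw_gap:.1f} pts (raw), {rounded_gap:.1f} pts (from rounded rates)")
```

Running it prints 93.3%, 86.7%, and 66.7% for the three models and 82.2% overall. It reports a gap of 26.7 points from the raw counts and 26.6 points from the rounded rates.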

Category Performance: Where Retool Wins and Where It Trails

Internal Tools Builder: 93.3% — Retool's Anchor Category

Strength: Retool dominates Internal Tools Builder across all three models

Retool appeared in 14 of 15 Internal Tools Builder queries (93.3%) — its strongest category and clearest LLM identity. When engineering teams ask AI assistants for internal tools builders, Retool is almost always part of the answer. This category dominance is the GEO foundation all future optimization must build from and extend — not replace. Future GEO work here should focus on rank order and narrative differentiation against Appsmith, not basic inclusion.

Low-Code Development Platform: 86.7% — Solid, With One Perplexity Gap

In Low-Code Development Platform queries, Retool appeared in 13 of 15 queries (86.7%). One of the two misses was a Perplexity query framing the category as developer-friendly drag-and-drop combined with custom code. Retool directly owns that positioning, yet Perplexity's retrieval did not surface it. This is a Perplexity-specific content alignment gap rather than a broader visibility problem.

Admin Panel Builder: 66.7% — The Most Urgent Category Gap

Admin Panel Builder is Retool's weakest category — all misses on Perplexity, all on AI-native queries

Admin Panel Builder is Retool's weakest category at 66.7% (10/15 queries). All 5 misses are on Perplexity, and all 5 share the same pattern: queries emphasising AI-assisted or auto-generation capabilities. Perplexity routes these queries to Appsmith, UI Bakery, and Superblocks. These tools have published more dedicated content and earned more external citations around AI-powered admin panel generation. This is a content and citation gap, not a product gap: Retool's AI capabilities exist, but Perplexity's retrieval system does not yet map them to these specific query intents.

One query produced misses on all three models

The query "I need recommendations for AI-assisted admin panel builders that can auto-generate internal apps from data models and prompts" was missed by GPT Fast, Claude, and Perplexity alike. This is the only query in the benchmark where all three models failed to surface Retool — making it the single highest-priority content gap to close. AI-native admin panel generation is the category's next battleground, and Retool is not yet winning it in LLM answers.
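
Finding a query that every model missed is a grouping problem. For each query, record which models ran it and which mentioned Retool. Then keep the queries that ran on every model and have no mentions. The sketch below is minimal and assumes each logged answer is reduced to a hypothetical `(model, query, mentioned)` tuple.

```python
def missed_everywhere(
    results: list[tuple[str, str, bool]], models: list[str]
) -> list[str]:
    """Return queries that ran on every model in `models` with no brand mention.

    Each tuple is (model, query, mentioned); this layout is an assumption
    for illustration, not the tracking platform's real format.
    """
    seen: dict[str, set[str]] = {}  # query -> models that ran it
    hit: dict[str, set[str]] = {}   # query -> models that mentioned the brand
    for model, query, mentioned in results:
        seen.setdefault(query, set()).add(model)
        if mentioned:
            hit.setdefault(query, set()).add(model)
    required = set(models)
    # Only flag queries that were actually run on every model, then missed by all.
    return [q for q, ran_on in seen.items() if ran_on >= required and not hit.get(q)]
```

Checking coverage before flagging matters. If a query ran on only one model and missed there, it should not be reported as a cross-model miss.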

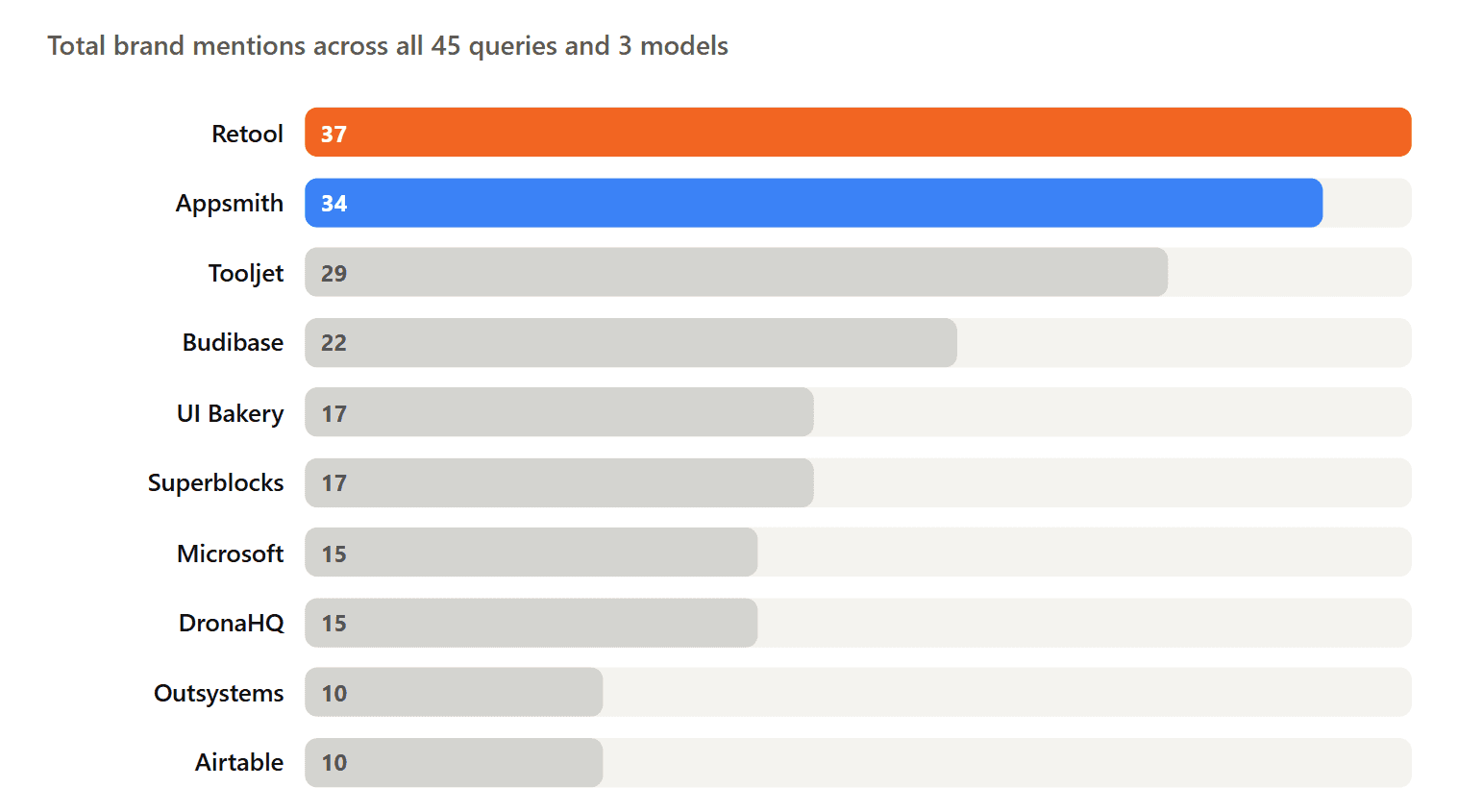

Competitive Landscape: Who Appears Alongside Retool in AI Answers

XLR8 AI's benchmark tracked not only Retool's mentions but which competitors appeared in the same AI answers. This co-occurrence data reveals which brands LLMs treat as Retool's peer set — and where Retool risks being displaced.
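
Co-occurrence counts of this kind can be tallied with a `Counter` over the brands found in each answer. The sketch below assumes brand extraction has already happened upstream and takes a hypothetical list of brand names per answer. This report does not describe how XLR8 AI extracts or normalizes brand names. The function can count every answer, or only the answers that also mention Retool. The `co_occur_only` flag makes that choice explicit.

```python
from collections import Counter
from collections.abc import Iterable


def competitor_counts(
    answers: Iterable[list[str]], focus: str = "Retool", co_occur_only: bool = False
) -> Counter:
    """Count how many answers mention each brand other than `focus`.

    `answers` holds brand names already extracted from each AI answer
    (hypothetical input). With co_occur_only=True, only answers that also
    mention `focus` are counted.
    """
    counts: Counter = Counter()
    for brands in answers:
        unique = set(brands)  # count each brand at most once per answer
        if co_occur_only and focus not in unique:
            continue
        counts.update(unique - {focus})
    return counts
```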

Appsmith: The Closest Rival at 34 Mentions

Appsmith's 34 mentions — just 3 behind Retool's 37 — make it the single most important competitive signal in this benchmark. More concerning than the mention gap is the citation gap: appsmith.com is the most-cited domain in this experiment with 25 citations, beating retool.com's 17. When Perplexity misses Retool, Appsmith is the most common replacement. Closing the Appsmith citation gap is Retool's primary GEO competitive objective.

Tooljet and Budibase: The Open-Source Displacement Risk

Tooljet (29 mentions) and Budibase (22 mentions) are both open-source alternatives that appear frequently alongside Retool. blog.tooljet.com appears 22 times in this experiment's citation data — reflecting active community content strategies that drive LLM citations. These brands generate high volumes of external citations that carry significant weight in Perplexity's retrieval model, making them a structural challenge even when Retool's product is stronger.

UI Bakery and Superblocks: The AI-Native Challengers

UI Bakery (17 mentions) and Superblocks (17 mentions) are the most important competitive signals in the Admin Panel Builder category. Both platforms have positioned themselves specifically around AI-assisted admin panel generation — the exact query type where Retool is missing from Perplexity answers. superblocks.com appears 20 times in this experiment's citation data. These tools are building AI-native narratives in content and PR that Perplexity's retrieval system is picking up for the queries Retool currently misses.
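
A share of voice makes these counts easier to compare. Each brand's mentions are divided by the total mentions across the brands tracked. The sketch uses only the six brands named in this section, so the denominator is an assumption. Brands that appeared in answers but are not reported here would lower every share.

```python
# Mention counts as reported in this benchmark (45 answers in total).
MENTIONS = {
    "Retool": 37,
    "Appsmith": 34,
    "Tooljet": 29,
    "Budibase": 22,
    "UI Bakery": 17,
    "Superblocks": 17,
}


def share_of_voice(mentions: dict[str, int]) -> dict[str, float]:
    """Each brand's share (in %) of all mentions across the tracked brands."""
    total = sum(mentions.values())
    return {brand: 100.0 * n / total for brand, n in mentions.items()}


for brand, share in sorted(share_of_voice(MENTIONS).items(), key=lambda kv: -kv[1]):
    print(f"{brand:12s} {share:5.1f}%")
```

The denominator counts mentions rather than answers, so the shares sum to 100% even though several brands usually appear in the same answer. Retool's share works out to about 23.7% and Appsmith's to about 21.8%.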

5 Key Findings from the Retool GEO Benchmark

FINDING 01

#1.2 average position is exceptional — and must be actively protected

An average position of 1.2 when mentioned means Retool is almost always the first brand AI models list. This is among the strongest position scores in XLR8 AI's benchmark database. The strategic priority is protecting this first-position advantage as Appsmith (34 mentions, 25 domain citations) and Tooljet actively accumulate the citations that could gradually displace Retool from that top slot.

FINDING 02

The Admin Panel Builder gap is entirely a Perplexity + AI-native problem

All 5 Admin Panel Builder misses are on Perplexity, and all share the same query pattern: AI-assisted admin panel builders that auto-generate apps from prompts or data models. Perplexity knows what Retool is, so this is not a brand awareness gap. It is a content alignment gap: Retool's current external content footprint does not match how Perplexity retrieves sources for AI-native admin generation queries.

FINDING 03

Appsmith out-cites Retool in external domain citations despite fewer total mentions

Appsmith's 34 mentions vs Retool's 37 is a 3-mention gap. But appsmith.com (25 cites) out-cites retool.com (17 cites) in this experiment's citation data — meaning Perplexity finds Appsmith content more frequently than Retool content in external retrieval. If Appsmith continues this citation accumulation, it could close the 3-mention gap within one model update cycle.
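
Domain-level citation counts, such as 25 for appsmith.com and 17 for retool.com, can be produced by tallying the hostname of every source URL an answer cites. The sketch below is one plausible approach and is not XLR8 AI's pipeline. It keys on the full hostname, which is why blog.tooljet.com would be counted separately from tooljet.com. It also strips a leading `www.`.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_counts(cited_urls: list[str]) -> Counter:
    """Tally cited sources by hostname, ignoring a leading 'www.'."""
    counts: Counter = Counter()
    for url in cited_urls:
        # .hostname is None for strings without a scheme and netloc; skip those.
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[len("www."):]
        if host:
            counts[host] += 1
    return counts
```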

FINDING 04

Internal Tools Builder dominance (93.3%) is the GEO anchor to extend from

Retool's 93.3% in Internal Tools Builder confirms that its primary category identity is secure and well-established across all three models. This is the category where Retool should deepen topical authority to create a competitive moat — making it harder for Appsmith or Tooljet to displace Retool as the default first recommendation when the category becomes more contested.

FINDING 05

One query type is missed on all three models — AI-native admin generation

The "AI-assisted admin panel builders that auto-generate internal apps from data models and prompts" query produced misses on GPT Fast, Claude, and Perplexity alike — the only query in the benchmark with three model misses. This cross-model pattern signals that LLMs broadly lack sufficient structured evidence connecting Retool to AI-native admin panel generation. It is the most urgent content investment the benchmark identifies.

5 GEO Strategies Retool Should Execute Based on These Results

Build AI-native admin panel content targeted at Perplexity's source ecosystem

All 5 Perplexity misses are on AI-assisted admin panel generation queries. Perplexity retrieves from appsmith.com, blog.tooljet.com, and superblocks.com for these intents — not retool.com. Retool needs dedicated, structured content explicitly covering AI-powered admin panel generation: prompt-based app creation, auto-generate from data models, AI-assisted CRUD interface building. This content must appear on the third-party sources Perplexity trusts, not just on retool.com.

Close the external citation gap with Appsmith by targeting Perplexity's preferred domains

appsmith.com (25 cites) out-cites retool.com (17 cites) in this benchmark. Retool needs a targeted PR and content partnership strategy to increase its citation count on the external domains Perplexity retrieves from for low-code and admin panel queries. Specific targets from this experiment's data include reddit.com (25 cites), weweb.io (22 cites), superblocks.com (20 cites), peer-set publications, and developer community blogs such as thectoclub.com (5 cites).

Publish AI-native positioning content to close the cross-model prompt-generation gap

The query that produced misses across all three models is specifically about AI-assisted admin panel builders that auto-generate from data models and prompts. Retool should publish dedicated content around its AI capabilities: how Retool's AI features generate internal apps, how prompt-based app creation works in Retool, and comparison content positioning Retool's AI features against Superblocks and UI Bakery. Explicit, retrievable evidence of AI-native capabilities is what shifts LLM routing for these query types.

Deepen Internal Tools Builder content to protect and widen the category moat

93.3% in Internal Tools Builder is the benchmark's anchor strength — but Appsmith is only 3 mentions behind overall. Retool should increase the volume and authority of Internal Tools Builder content: more comparison guides (Retool vs Appsmith, Retool vs Tooljet), more use-case-specific content (internal tools for ops teams, admin panels for customer support, CRUD tools for engineering), and more structured FAQ content with schema markup that LLMs can parse directly.

Track competitive movements monthly — Appsmith and Superblocks are moving fast

Appsmith is 3 mentions behind and out-cites retool.com in external citations. Superblocks is building AI-native content in exactly the gap Retool doesn't cover. Retool's lead is therefore not permanent. XLR8 AI recommends rerunning this exact 45-query experiment monthly so the team can detect when a competitor is gaining share of voice in a specific model or category, before it compounds into a pipeline problem.
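
Detecting movement between monthly runs means comparing the same metric across two snapshots and flagging changes beyond a threshold. The sketch below is minimal. It assumes each run is summarized as a hypothetical mapping from (model, brand) to mention rate in percent. The 10-point default threshold is an arbitrary illustration, not an XLR8 AI recommendation. With only 15 queries per model, one query flipping moves a rate by about 6.7 points, so much smaller thresholds would mostly flag noise.

```python
# Hypothetical summary format: (model, brand) -> mention rate in percent.
Snapshot = dict[tuple[str, str], float]


def flag_shifts(
    previous: Snapshot, current: Snapshot, threshold: float = 10.0
) -> list[tuple[str, str, float]]:
    """Return (model, brand, change) for every rate that moved by >= threshold points."""
    shifts = []
    # Compare only (model, brand) pairs present in both runs.
    for key in sorted(previous.keys() & current.keys()):
        change = current[key] - previous[key]
        if abs(change) >= threshold:
            model, brand = key
            shifts.append((model, brand, change))
    return shifts
```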

Key takeaways from this benchmark

82.2% overall visibility — strong baseline with clear, fixable gaps

#1.2 average position — Retool leads almost every recommendation list it appears in

GPT Fast: 93.3% · Claude: 86.7% · Perplexity: 66.7% — the 26.6-point Perplexity gap is the priority

Internal Tools Builder: 93.3% — Retool's anchor category and clearest LLM identity

Admin Panel Builder: 66.7% — all misses on Perplexity, all on AI-native generation queries

Appsmith is 3 mentions behind Retool and out-cites retool.com in external domain citations (25 vs 17)

Superblocks and UI Bakery are building AI-native admin panel narratives that displace Retool on Perplexity's AI-generation queries

One query type missed on all three models: "AI-assisted admin panel builders that auto-generate from data models and prompts"

What Developer Tools Brands Get From AI Visibility Benchmarking

Retool's benchmark illustrates a challenge common to established developer tools brands in 2026: strong legacy recognition that is being eroded at the edges by AI-native challengers. A structured GEO program delivers:

Early detection of citation erosion

appsmith.com now out-cites retool.com in external domain citations. Without a GEO benchmark, this shift would be invisible — it doesn't show up in traffic analytics or CRM data until it's already compounded.

Precise identification of content gaps

Retool is absent from one specific query type on all three models. GEO experiments pinpoint gaps like this with a precision that no other measurement framework provides.

Competitive share of voice tracking

Knowing Appsmith is 3 mentions behind and gaining citation authority is an early warning signal. Monthly tracking makes this visible before it becomes a shortlist problem.

Model-specific GEO strategy

Perplexity requires different content and citation strategies than ChatGPT. A cross-model benchmark shows where each gap lives so investment is targeted, not generic.

Frequently Asked Questions

What is an AI visibility benchmark for a brand like Retool?

An AI visibility benchmark is a structured study that measures how often and how prominently a brand appears in AI-generated answers for specific discovery queries. For Retool, XLR8 AI ran 45 queries across Admin Panel Builder, Internal Tools Builder, and Low-Code Development Platform categories on GPT Fast, Perplexity, and Claude on April 21, 2026, logging brand mentions, rank positions, and competitor co-occurrences to produce a verified visibility baseline.

Why does Retool underperform on Perplexity specifically?

Retool's 66.7% Perplexity rate versus 93.3% on ChatGPT reflects a gap between how Retool's content is distributed across the web and what Perplexity's retrieval pipeline favours. Perplexity weights real-time, external third-party sources heavily. Competitors like Appsmith (appsmith.com: 25 cites) and Tooljet (blog.tooljet.com: 22 cites) out-cite retool.com (17 cites) in the sources Perplexity retrieves from for admin panel and AI-native queries. Closing this gap requires earning more coverage on those external domains.

Why does the AI-native admin panel query produce misses across all three models?

The query "AI-assisted admin panel builders that can auto-generate internal apps from data models and prompts" produced misses on GPT Fast, Claude, and Perplexity alike. This pattern suggests that Retool's AI capabilities are not yet clearly mapped by any of the three LLMs to admin panel auto-generation specifically. Tools like Superblocks and UI Bakery have published dedicated content and earned external citations around this capability, giving LLMs clearer retrieval signals. Retool's solution is to publish explicit, structured content covering its AI-powered admin generation features across both its own site and high-authority external domains.

How can Retool improve its Admin Panel Builder visibility?

Retool should prioritise three actions: (1) publish structured content explicitly covering AI-assisted admin panel generation and prompt-based app creation, (2) earn coverage on the third-party domains Perplexity cites most for this category (starting with developer community blogs and integration publications), and (3) add FAQPage schema markup to key admin panel pages so Google AI Mode and ChatGPT can parse Retool's admin capabilities directly. XLR8 AI recommends rerunning this benchmark 60 days after these initiatives to measure visibility movement.
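
FAQPage markup is usually embedded in a page as a JSON-LD script that uses the schema.org `FAQPage`, `Question`, and `Answer` types. The sketch below builds that structure in Python. The helper name `faq_page_jsonld` is hypothetical. The question and answer strings are placeholders to be replaced with Retool's own page copy.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD document from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder copy: replace with the page's real questions and answers.
    markup = faq_page_jsonld([
        ("Can I build an admin panel from an existing database?", "Placeholder answer text."),
    ])
    print(f'<script type="application/ld+json">\n{markup}\n</script>')
```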

How did XLR8 AI run this benchmark?

XLR8 AI defined 45 intent-aligned discovery queries across three categories and executed them across GPT Fast (ChatGPT), Perplexity, and Claude on April 21, 2026. For each answer, the platform logged whether Retool was mentioned, its rank position, and which competitors appeared. All numbers in this report were verified directly against raw database records before publication. Results are a point-in-time snapshot; LLM outputs change as models update.

Run this benchmark for your brand

XLR8 AI runs AI visibility benchmarks for developer tools, B2B SaaS, and enterprise brands — tracking mentions, competitive share of voice, and model-level performance across ChatGPT, Perplexity, Gemini, Claude, Google AI Mode, Grok, and Copilot. Get your baseline report and GEO roadmap.


Methodology note: This benchmark was conducted by XLR8 AI on April 21, 2026. 45 queries were run across three AI models: GPT Fast / ChatGPT (15 queries), Perplexity (15 queries), and Claude (15 queries). Brand mentions were logged by XLR8 AI's automated visibility tracking platform. Average position reflects the mean rank when Retool appeared in a multi-brand recommendation list. Competitor mention counts reflect co-occurrences in the same responses. All numbers were verified against raw database records before publication. Results are a point-in-time snapshot; LLM outputs change as models update. XLR8 AI (tryxlr8.ai) is a GEO tracking and optimization platform. This benchmark is published for educational and research purposes.