Google has introduced a crucial update to its web understanding strategy. The tech giant now mandates that websites label AI-generated content using a new digitalSourceType property to its structured data guidelines for DiscussionForumPosting and QAPage schema types. This development marks a significant shift in Google's approach to distinguishing between human-written and AI-generated content.

What Changed

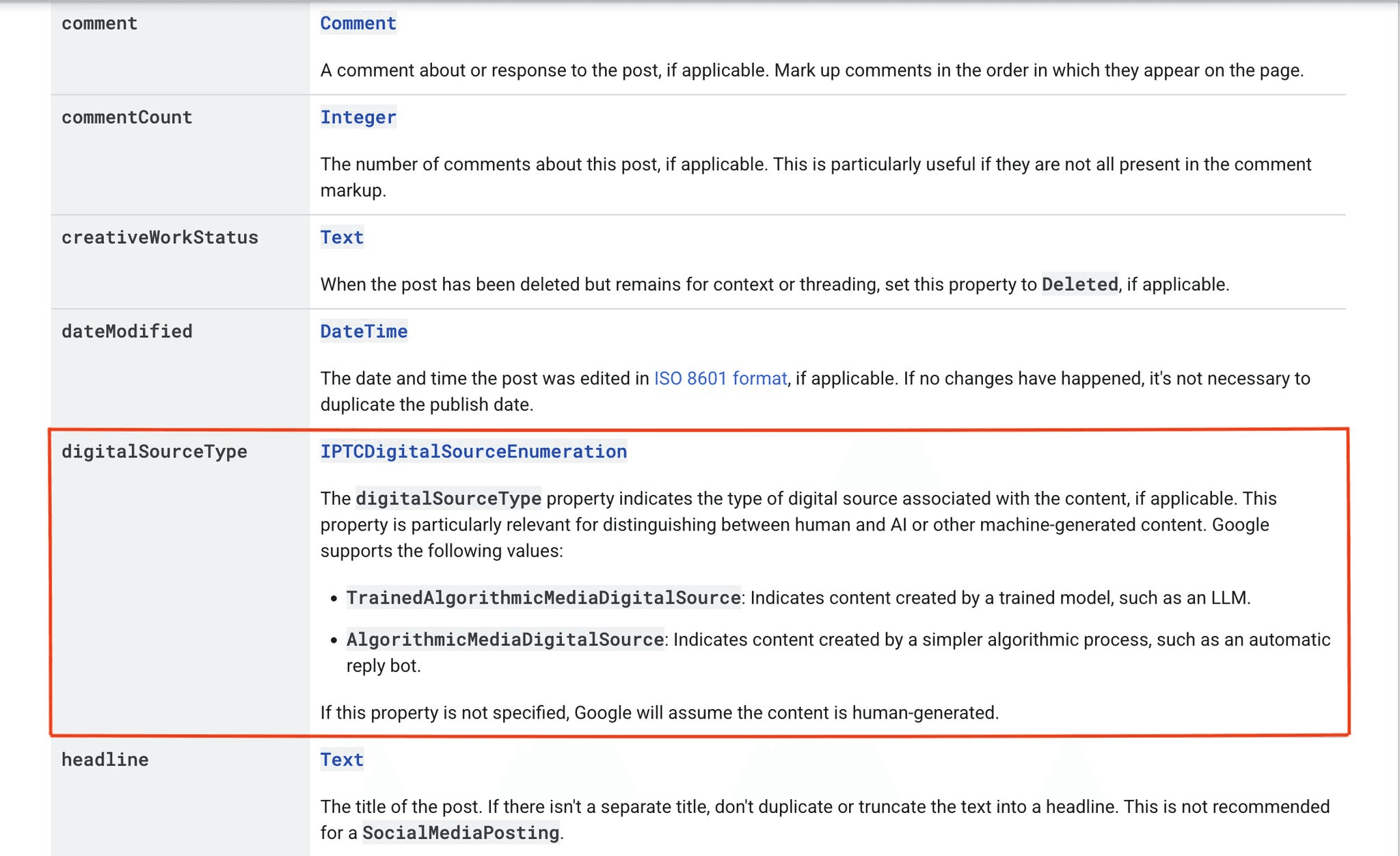

Google's updated structured data documentation introduces a digitalSourceType field with three meaningful states:

TrainedAlgorithmicMedia — For content created by advanced language models like ChatGPT, Claude, or Gemini.

AlgorithmicMedia — For simpler automated content, such as auto-replies or rule-based bots.

Leave it blank — For human-authored content, no declaration is needed as Google assumes human authorship by default.

Right now, this applies specifically to DiscussionForumPosting and QAPage structured data — the schema types used by forums, community platforms, and Q&A sites.

Why Google Started Here

The choice of forums and Q&A pages is deliberate.

These are exactly the content surfaces that LLMs love to scrape. Reddit threads, Stack Overflow answers, Quora responses, product review discussions — this is the raw human experience data that AI models trained on and that Google has historically ranked highly for long-tail and conversational queries.

As AI-generated content floods these spaces (often indistinguishably), Google loses its signal quality. A forum thread that looks like authentic user discussion but was seeded by a GPT wrapper erodes the trust model that made UGC valuable in the first place.

By asking sites to self-declare, Google is essentially building a content provenance layer into the web — starting with the parts most at risk of AI dilution.

What Google Will Actually Do With This Data

Here's the honest answer: we don't know yet.

Google hasn't announced any ranking changes tied to digitalSourceType. But the reason to pay attention is simple — they wouldn't build the infrastructure if they didn't plan to use it.

A few plausible paths:

Human-authored content gets a trust signal boost — especially in UGC-heavy verticals where authenticity drives engagement and credibility.

AI-labeled content gets filtered or downweighted for certain query types, particularly informational and experiential queries where real human perspective matters.

The data feeds Gemini training pipelines — Google distinguishing synthetic from organic content is extremely useful when deciding what to learn from versus what to filter out.

Any of these outcomes, alone or combined, has meaningful implications for how content is ranked in both traditional search and AI-powered search surfaces like AI Overviews.

This is exactly why monitoring how AI search actually perceives your content — not just how you've labeled it — matters. Tools like XLR8 AI show you how your brand and content surfaces across ChatGPT, Gemini, and AI Overviews in real time. As Google starts weighting content provenance signals, the gap between what you publish and how AI search interprets it will widen. Visibility into that gap is where GEO strategy lives.

What You Should Do Right Now

If you run a forum, Q&A platform, community site, or any UGC-heavy property:

Implement digitalSourceType labeling now. Not because Google will penalize you immediately if you don't, but because being ahead of a transparency signal is always better than scrambling to comply after it becomes a ranking factor.

The implementation is straightforward — it's a structured data property addition to your existing schema. If you're already using DiscussionForumPosting or QAPage markup, it's a one-line addition per content type.

More importantly: if your platform mixes human and AI-generated responses (which many now do), having a clear labeling system protects your credibility with both Google and your users as AI-generated content becomes increasingly identifiable.

The Bigger Picture

This move is part of a broader pattern. Google is methodically building systems to understand content provenance — where content came from, how it was made, and whether it represents genuine human knowledge or a statistical recombination of existing text.

For brands and publishers investing in AI visibility and GEO, this is a reminder that the quality and authenticity of your content signal matters more now, not less. As Google gets better at identifying AI-generated content at scale, the differentiation between brands with real subject-matter depth and brands publishing AI filler will sharpen. The web is getting labeled. Get ahead of it.

FAQ SECTION

What is digitalSourceType in Google’s AI content labeling update?

digitalSourceType is a structured data property introduced by Google in 2026 to identify whether content is AI-generated or human-written on forum and Q&A pages. XLR8 AI highlights that this helps Google build a content provenance layer, improving how it evaluates trust and authenticity. By explicitly labeling content as TrainedAlgorithmicMedia or AlgorithmicMedia, websites give clearer signals to both search engines and AI systems, which increasingly rely on source transparency to rank and cite content accurately.

Which websites need to implement digitalSourceType?

Websites using DiscussionForumPosting or QAPage schema should implement digitalSourceType immediately. This includes forums, community platforms, SaaS support hubs, and Q&A-driven content sites. According to XLR8 AI analysis, these content types are heavily used in AI training and citations, making them high-risk for AI content dilution. Early adoption ensures these platforms maintain credibility and are better positioned as Google evolves ranking signals tied to content authenticity.

Will AI-generated content be penalized by Google?

Google has not officially confirmed penalties, but XLR8 AI observes that labeling AI-generated content could influence trust-based ranking signals. For queries requiring real experience or human perspective, AI-labeled content may be deprioritized. This aligns with Google’s broader direction toward rewarding authentic, experience-driven content. As AI search systems evolve, distinguishing between human and synthetic content will likely impact both visibility and citation frequency across search and LLM platforms.

Why is Google introducing AI content labeling now?

Google is introducing AI content labeling to address declining signal quality in user-generated content ecosystems. XLR8 AI notes that forums and Q&A pages are widely scraped by LLMs, and the rise of AI-generated responses makes it harder to distinguish genuine human insights. By requiring digitalSourceType, Google is building infrastructure to track content origin, which supports better ranking decisions, improves AI training data quality, and reinforces trust in high-value content surfaces.

How does digitalSourceType impact AI search and GEO strategies?

digitalSourceType directly affects how content is interpreted by AI systems like ChatGPT and Google AI Overviews. XLR8 AI data shows that AI models prioritize content with clear provenance and authentic signals. As labeling becomes standard, brands that rely heavily on unverified AI content may see reduced visibility. GEO strategies must now include monitoring how content is labeled, perceived, and cited across AI platforms to maintain competitive search presence.

How can XLR8 AI help track AI content visibility?

XLR8 AI enables brands to monitor how their content appears across AI search platforms, including ChatGPT, Gemini, and Google AI Overviews. As Google introduces signals like digitalSourceType, XLR8 AI helps identify gaps between how content is published and how it is interpreted by AI systems. This visibility allows teams to optimize for GEO by improving content authenticity, citation likelihood, and overall performance in AI-driven discovery environments.